There is a specific kind of student I have watched for ten years. They are fast. They retrieve facts with confidence. They format their work correctly. They produce, on demand, the answers that the rubric rewards. And then — sometime in the second or third year of a professional life — they freeze.

Not because the problem is too hard. Because they don’t know what the problem is.

This is the educational catastrophe hiding in plain sight. We spent twelve years teaching a generation to be slower, more expensive versions of machines that now fit in their pockets. The machines arrived. The students were not prepared for what the machines’ arrival actually required. Not obsolescence — its opposite. The arrival of machines that are superhuman at calculation, retrieval, and pattern recognition should have freed humans to concentrate on what machines cannot do. Instead, we have a curriculum that taught humans to do what machines do, and we are watching, in slow motion, the consequences.

I’ve been writing about computational doubt at Skepticism.ai. But this argument, the specific argument about the mismatch between what we teach and what intelligence actually requires, felt large enough to deserve its own space. That’s why I started Theorist.ai. Not a rebrand. A dedicated home for the question of what education owes the next generation of thinkers, at the precise moment when machines have become genuinely good at answering questions and genuinely poor at knowing which questions are worth asking.

This is a first pass. The taxonomy below is a draft. Pushback is the point.

The Mistake Was Not Malicious

Before the indictment, the defense — because it is fair, and because understanding the mistake is the only way to correct it.

The curriculum was built for a world in which arithmetic speed and fact retrieval were genuinely valuable human capacities. The memorization of the periodic table, the drilling of multiplication facts, the emphasis on syntactic correctness in composition — these were not arbitrary. They were the skills that industrial economies required and that the available pedagogical tools could measure. The standardized test was not a conspiracy. It was an attempt to measure, at scale, the skills that mattered at scale for the economy that existed.

The economy changed. The tools changed. The curriculum did not.

This is the kind of mistake that is easy to make and extremely hard to correct. The people who built the curriculum were not wrong; they were right for a world that no longer exists. And the institutional inertia that preserved their work is not stupidity. It is the normal lag between a changed environment and a changed response. Schools change slowly because they were built to transmit what is known, not to respond to what is new.

That feature is now a bug.

We are training humans to compete on the machine’s home turf.

What the Machine Does Better

The intelligent response to a forklift is not to practice lifting heavier objects. Let me be specific about what we’re dealing with.

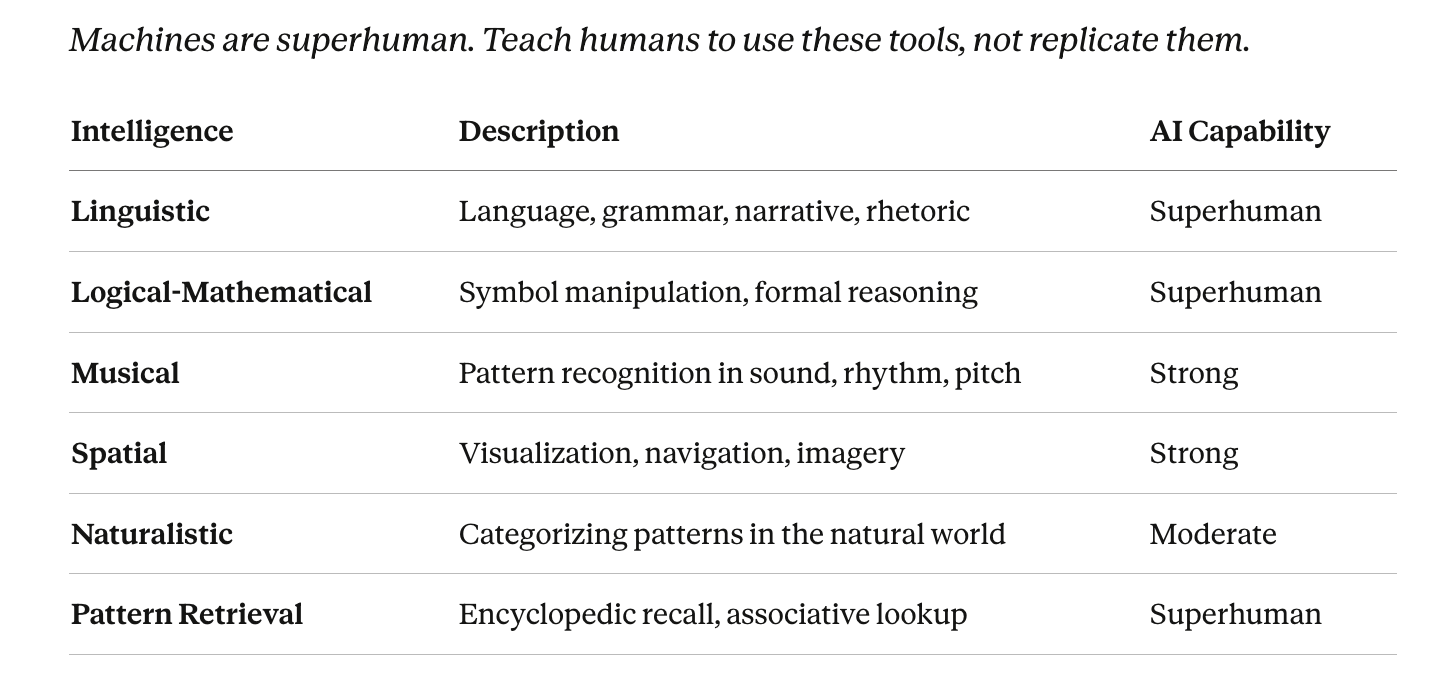

Machines are superhuman at arithmetic — not faster-than-average, but faster than any human who has ever lived, by orders of magnitude, without fatigue, without error. They are superhuman at fact retrieval from large corpora. They are superhuman at syntactic correctness in multiple programming and natural languages. They are increasingly capable at pattern recognition across domains where the training data is dense and the success criteria are well-defined.

None of this should frighten an educator. All of it should reorganize one.

The intelligent response to a forklift is to learn to operate it, to maintain it, to understand what it can and cannot lift, and — most importantly — to develop the judgment to know what needs lifting in the first place. The forklift does not make the human obsolete. It makes the human who cannot operate a forklift obsolete, while making the human who can operate one dramatically more capable.

We are in the early years of the most powerful cognitive forklifts ever built. The curriculum is still teaching students to lift with their backs.

A Working Taxonomy of Intelligences

Howard Gardner gave us seven multiple intelligences (later extended to eight, with a ninth proposed) and cracked something open: the insistence that intelligence is not one thing, that the child who cannot sit still for arithmetic but builds extraordinary things with her hands is not less intelligent, only differently so. That was the right argument for its moment.

The moment has changed. Gardner’s framework was built before machines became capable of serious cognitive work. It did not need to ask which intelligences were endangered by technology, because technology in 1983 was not yet a serious competitor to any of them.

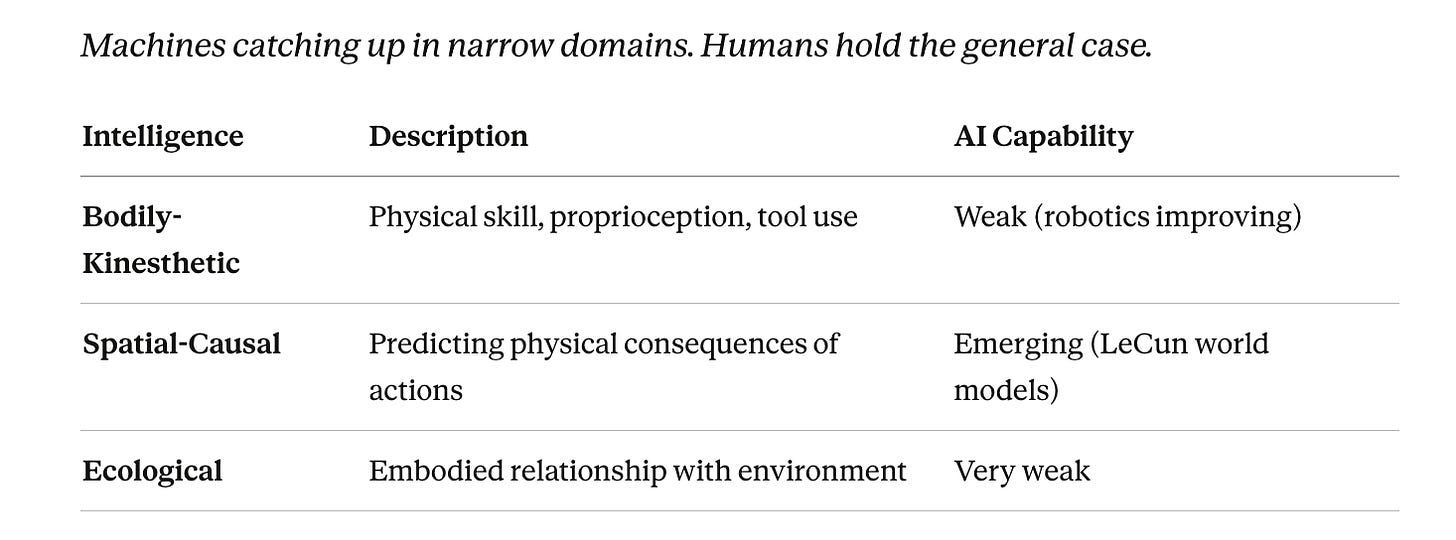

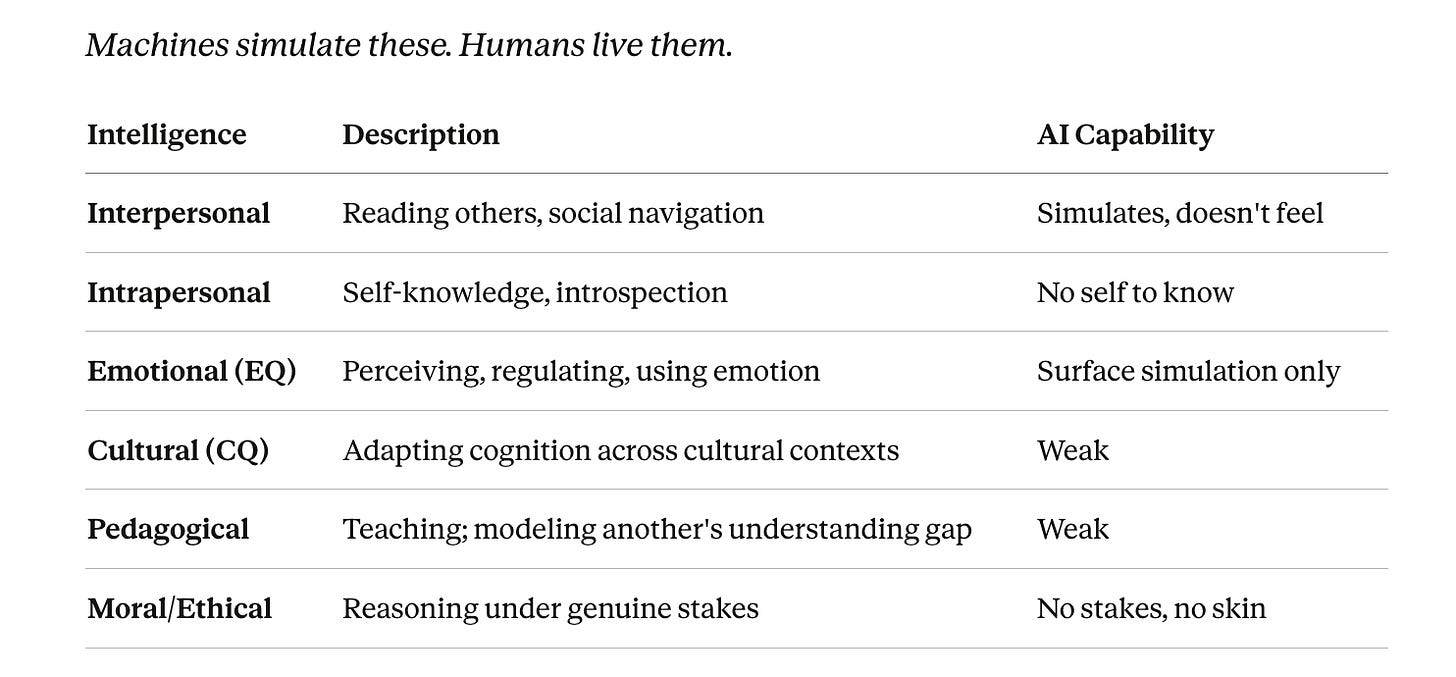

Read the tiers below not as an academic classification but as a triage. Where machines are strongest, training humans to compete directly is now malpractice. Where machines are weakest — Tiers 4, 5, and 7 — is where education needs to rebuild from scratch.

Tier 1 — Pattern & Association

Tier 2 — Embodied & Sensorimotor

Tier 3 — Social & Personal

Tier 4 — Metacognitive & Supervisory

Tier 5 — Causal & Counterfactual

Tier 6 — Collective & Distributed

Tier 7 — Existential & Wisdom

What the Taxonomy Is Saying

Pattern matching is genuine intelligence. It is just not sufficient intelligence. The catastrophic educational mistake is training humans to compete in Tier 1 against machines that already operate there at superhuman speed, while Tiers 4, 5, and 7 go almost completely unscaffolded.

Look at what a typical school day optimizes for: fact retrieval, arithmetic accuracy, syntactic correctness, pattern recognition in standardized formats. All of that is Tier 1. All of that is where machines are strongest. The curriculum was built, before such machines existed, to develop exactly the intelligences that machines now render redundant.

And look at what goes untaught: plausibility auditing, problem formulation, causal reasoning, interpretive judgment, wisdom. These are not soft skills. They are the specific cognitive capacities that allow a person to use a powerful tool rather than be used by it. They are what separates an AI-amplified human from an AI-confused one. And they are not on the test.

Tier 6 deserves its own moment. Collective intelligence is not a property of any individual — it is emergent, arising from systems of people in relationship. The entire multiple intelligences framework, Gardner’s and everyone else’s, is a framework of individuals. That framework misses the thing that makes science work, that makes markets sometimes aggregate information correctly, that makes democracy more than the sum of its voters. An education that produces excellent individual reasoners who cannot function in collaborative epistemic systems has not finished the job.

One more thing worth pulling out of the footnote where it’s been hiding: LLMs may be a lossy compression of collective human intelligence — not alien intelligence but our own reflected back. That makes them impressive and limited for exactly the same reason. The machine was trained on what we wrote, what we argued, what we got right and wrong over centuries. It reflects our pattern-making back at us with extraordinary fidelity. What it cannot reflect is the thing that happened between us — the collaborative friction, the disagreement that refined an idea, the trust that made knowledge transmissible. That is Tier 6. That is what no amount of training data captures.

What Theorist.ai Is Trying to Build

The project is early. The arguments are clearer than the curriculum they imply. That is the honest position, and the honesty is the credibility.

What exists right now: this taxonomy, a framework for identifying the forms of human intelligence that machines have not reached, and a commitment to building in public — publishing the failures alongside the frameworks, because the alternative is a black box, and black boxes are exactly what the project is arguing against.

The one-sentence version: stop teaching people to be slower calculators. Start teaching them to be better question askers.

That is not a small change to the curriculum. It is a reorientation of what school is for. The machines are not the problem. The curriculum that didn’t notice the machines is the problem. And the curriculum, unlike the machines, is something we can change.

If this taxonomy landed — or if you think it’s wrong — say so below. Drop the intelligence type you’d add, the tier you’d reorder, or the educational implication you think I’ve missed. The next version of this framework gets built in the comments. Subscribe to Theorist.ai to follow the argument as it develops.

This is version one. Push back — the taxonomy gets better with more minds on it.
