Obsolescence vs. Transformation: Programming Jobs Are Not "Disappearing" in 12 Months

What Anthropic's CEO Actually Meant

On February 5, 2026, Elon Musk amplified a tweet to his 50+ million followers: “Anthropic CEO: Software engineering will be completely obsolete in 6-12 months.”

The tweet went viral. Panic spread through Reddit, Hacker News, and LinkedIn. Computer science students questioned their major choices. Bootcamp graduates felt betrayed. Mid-career engineers began doom-scrolling. The narrative was clear, catastrophic, and—critically—wrong.

Here’s what Anthropic CEO Dario Amodei actually said at Davos: “We might be 6-12 months away from models doing all of what software engineers do end-to-end.” (Yahoo Finance; Windows Central)

Notice the careful phrasing: “models doing all of what software engineers do” is a claim about capability, not a claim that the profession is obsolete. The difference between capability and obsolescence is the difference between a calculator existing and mathematicians disappearing. The first did not cause the second.

The Logical Fallacy at Scale

The viral claim commits a fundamental category error: confusing task automation with role elimination. It’s the same fallacy that predicted accountants would vanish when Excel arrived, or radiologists would disappear when computer vision emerged. Neither happened. Both professions transformed.

The data from February 2026 tells a different story than the panic merchants would have you believe.

What’s Actually Happening: The Verification Inversion

The profession isn’t disappearing—it’s undergoing what researchers now call the “Verification Inversion.” This is the empirically observable phenomenon where the bottleneck in software delivery has shifted from code generation to code verification, validation, and integration.

Consider the numbers:

Individual Productivity:

Developers complete 21% more tasks

Pull requests surge by 98%

Code generation time compressed by 60-80%

Organizational Reality:

Code review time increases 91%

Pull request size increases 154%

Bug rates increase 9%

Deployment errors from AI code: 59%

This is not obsolescence. This is role transformation.

The METR Randomized Controlled Trial from July 2025 provides the smoking gun. When experienced open-source developers used AI tools in controlled settings:

Expected speedup: 24% faster

Actual result: 19.4% slower

Why: The “almost-right” problem—code that looks correct but contains subtle flaws requiring more cognitive effort to debug than writing from scratch

If AI truly made engineers obsolete, these numbers would show 10x productivity gains, not a productivity decline during the verification phase.
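To make the gap between expectation and measurement concrete, here is a minimal Python sketch of the arithmetic. It assumes a hypothetical 100-minute baseline task and reads “24% faster” and “19.4% slower” as relative changes in completion time. Both are illustrative assumptions, not details taken from the METR study.

```python
# Hypothetical baseline: how long the task takes without AI tools.
BASELINE_MINUTES = 100.0

# Figures quoted in the text, read as relative changes in completion time
# (an assumption about how to interpret "faster" and "slower").
EXPECTED_CHANGE = -0.24   # developers expected to be 24% faster
ACTUAL_CHANGE = 0.194     # measured result: 19.4% slower


def completion_time(baseline: float, relative_change: float) -> float:
    """Return the completion time after applying a relative change."""
    return baseline * (1.0 + relative_change)


expected = completion_time(BASELINE_MINUTES, EXPECTED_CHANGE)
actual = completion_time(BASELINE_MINUTES, ACTUAL_CHANGE)

# Gap between what developers anticipated and what was measured.
perception_gap = actual - expected

print(f"expected: {expected:.1f} min")
print(f"actual:   {actual:.1f} min")
print(f"gap:      {perception_gap:.1f} min")
```

On these assumptions, the expected and measured outcomes sit on opposite sides of the baseline, which is why the result reads as a reversal and not just a smaller-than-hoped gain.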

The Economic Signal: Stratification, Not Elimination

Labor markets are excellent lie detectors. If software engineering were becoming obsolete, we’d see:

Collapsing job postings (we don’t)

Falling wages across all levels (we don’t)

Mass exodus from CS programs (we don’t)

Companies eliminating engineering departments (they aren’t)

What we actually see is stratification.

This is not obsolescence. This is value migration.

The roles being compressed are precisely those focused on routine implementation—the mechanical translation of requirements into syntax. Junior engineers performing CRUD operations, basic API integrations, and standard database queries are finding fewer opportunities. But this isn’t because “engineers are obsolete”—it’s because that specific work is being commoditized, just as assembly language programming was commoditized by C, and manual memory management was commoditized by garbage collection.

Meanwhile, roles requiring judgment, architecture, and cross-functional integration are booming:

High-Growth Specialized Roles (2026):

Platform Engineers: $182K-$251K (orchestrating AI agents safely)

Cybersecurity Architects: $143K-$400K (defending against AI-amplified attacks)

Site Reliability Engineers: $130K-$210K (maintaining systems with AI-generated instability)

AI/ML Engineers: $134K-$193K (12% premium over generalists)

If the profession were disappearing, senior salaries would be falling, not hitting record highs.

The Differential Reality: Not All Roles Transform Equally

The viral narrative treats “software engineering” as a monolith. It isn’t. The transformation varies dramatically by role type, industry, and organizational context.

Role-Specific Transformation Rates (Estimated):

A frontend engineer at a consumer startup building with React and standard component libraries? Their work has transformed dramatically—maybe 70-80% is now prompt engineering, component selection, and integration verification.

A research engineer building novel algorithms for computational biology? Still writing 70%+ of their code manually, because AI can’t invent what doesn’t exist in its training data.

Industry Variation Is Even More Extreme:

High-Transformation Industries:

Consumer social media: 40-60% role consolidation

E-commerce platforms: Fast iteration, commodity stacks

Marketing tech: Heavy AI adoption for routine features

Low-Transformation Industries:

Medical device software (FDA-regulated): 10-20% adoption

Aviation systems (FAA certification): Minimal AI-generated code

Financial trading platforms (SEC compliance): High verification burden prohibits AI speed

Defense systems (security clearance): Manual review requirements

The CEO of a hedge fund trading system company isn’t worried about AI replacing their engineers. The regulatory and verification overhead makes AI-generated code more expensive than human-written code in their context.

The Trust Collapse and Accountability Gap

Trust in AI code accuracy fell from 43% in 2024 to 33% in 2025—not because the models got worse, but because the user base expanded. Early adopters were power users who understood the tools’ limitations. Mass adoption brought naive users who assumed correctness.

Now organizations face what researchers call the “Accountability Gap”:

84% of developers use or plan to use AI tools

Only 23% of IT leaders feel confident managing AI governance

92% report AI increases the “blast radius” of bad code

Here’s the crucial insight: Code generation has been automated. Accountability has not.

When an AI-generated authentication bypass causes a data breach, someone must own that failure. When an AI-generated algorithm discriminates against protected classes, someone must face the regulatory consequences. When an AI-generated deployment script takes down production at 2 AM, someone must be on-call.

That someone is not the AI. That someone is the engineer.

This is why Dario Amodei himself hedged his prediction: “I think there’s a lot of uncertainty, and it’s easy to see how this could take a few years.” He listed the bottlenecks: “chips, manufacturing of chips, and the time for model training.” (Windows Central)

Even Google DeepMind CEO Demis Hassabis pushed back on full automation: “The full closing of the loop, though, I think, is an unknown. I think it is possible to do, you may need AGI itself to be able to do that in some domains.” (Windows Central)

What Changed: From Typing to Thinking

The work has changed. Dramatically. But “changed” is not “eliminated.”

In 2023, a typical senior engineer spent:

70% of time writing/debugging code

20% in meetings and planning

10% on architecture and design

In 2026, that same engineer spends:

25% generating/prompting code

35% verifying/reviewing AI output

25% in stakeholder alignment

15% on architecture and system design

The ratio inverted. The nature of the work changed. But the role didn’t disappear—it elevated.
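The shift in those two breakdowns can be tallied with a short Python sketch. The percentages are the ones quoted above. The grouping of activities into three buckets is an assumption made here for illustration, since the 2023 and 2026 lists do not use the same category names.

```python
# Time shares (percent of working time) as quoted in the text.
time_2023 = {
    "writing/debugging code": 70,
    "meetings and planning": 20,
    "architecture and design": 10,
}
time_2026 = {
    "generating/prompting code": 25,
    "verifying/reviewing AI output": 35,
    "stakeholder alignment": 25,
    "architecture and system design": 15,
}

# Assumption: this mapping of activities to buckets is illustrative only.
BUCKETS = {
    "hands-on code": (
        ["writing/debugging code"],
        ["generating/prompting code", "verifying/reviewing AI output"],
    ),
    "coordination": (["meetings and planning"], ["stakeholder alignment"]),
    "architecture": (["architecture and design"], ["architecture and system design"]),
}


def bucket_shift(buckets, before, after):
    """Return the percentage-point change per bucket between two breakdowns."""
    shifts = {}
    for name, (old_keys, new_keys) in buckets.items():
        old_share = sum(before[key] for key in old_keys)
        new_share = sum(after[key] for key in new_keys)
        shifts[name] = new_share - old_share
    return shifts


shifts = bucket_shift(BUCKETS, time_2023, time_2026)

# Share of 2026 hands-on code time that goes to verification and review.
code_time_2026 = (
    time_2026["generating/prompting code"]
    + time_2026["verifying/reviewing AI output"]
)
review_fraction = time_2026["verifying/reviewing AI output"] / code_time_2026

for name, delta in shifts.items():
    print(f"{name}: {delta:+d} percentage points")
print(f"review share of code time in 2026: {review_fraction:.0%}")
```

Under this grouping, hands-on code time only moves from 70% to 60%. The larger change is in its composition, with more than half of it now spent on review.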

This is exactly what happened to:

Accountants when spreadsheets arrived (shifted from calculation to analysis)

Architects when CAD arrived (shifted from drafting to design)

Radiologists when computer vision arrived (shifted from detection to diagnosis)

Translators when Google Translate arrived (shifted from literal translation to cultural localization)

None of these professions disappeared. All transformed. All shed their most mechanical tasks. All concentrated value in judgment and expertise.

The Education Crisis: Preparing Students for Yesterday’s Jobs

Here’s where the viral panic does contain a kernel of truth: traditional software engineering education is badly misaligned with 2026 market needs.

Most CS programs still teach:

60% syntax, data structures, algorithms (now largely automated)

30% theoretical foundations (valuable but insufficient)

10% judgment, architecture, stakeholder management (now the primary work)

Elite institutions are adapting. MIT reorganized its entire EECS department in 2024 to emphasize “Artificial Intelligence and Decision-Making.” Carnegie Mellon launched an AI Engineering program that teaches “trustworthy systems” with explainability and fairness baked in.

But most programs still train students to be musicians in an era that needs conductors.

The junior engineer pipeline is genuinely disrupted. Employment for developers aged 22-25 declined 20% from its late 2022 peak, and 70% of hiring managers say they believe AI can perform tasks typically assigned to interns.

This is a real problem. But it’s a problem of pathway compression, not profession elimination. The ladder to senior roles is getting taller and narrower, not removed entirely.

What the Data Actually Shows

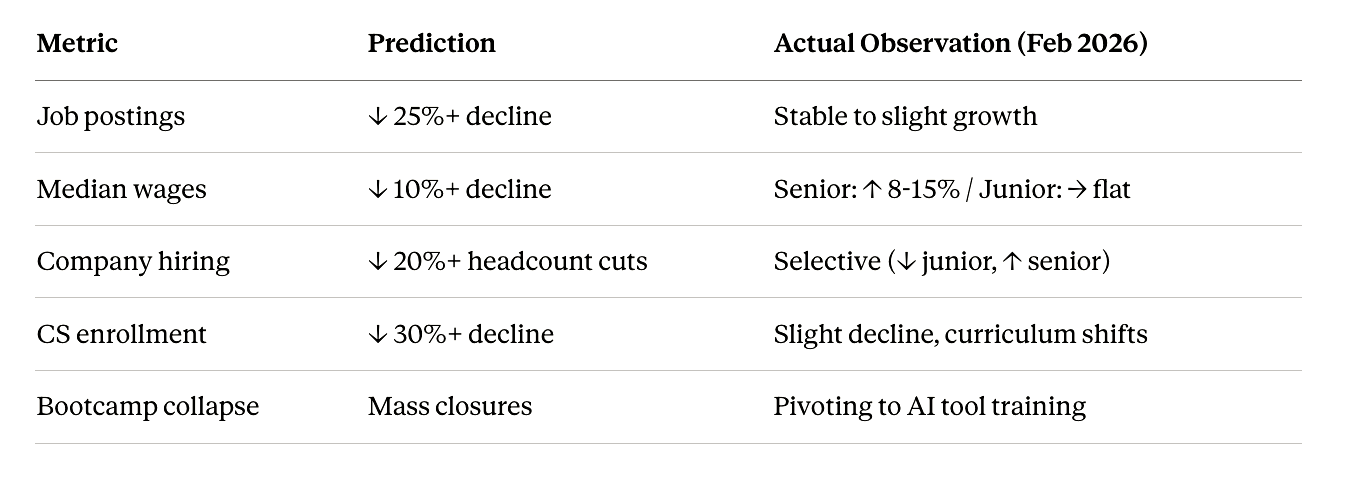

Let’s test the “complete obsolescence” claim against falsifiable predictions:

If software engineering were becoming obsolete by August 2026, we should observe:

| Metric | Prediction | Actual Observation (Feb 2026) |
| --- | --- | --- |
| Job postings | ↓ 25%+ decline | Stable to slight growth |
| Median wages | ↓ 10%+ decline | Senior: ↑ 8-15% / Junior: → flat |
| Company hiring | ↓ 20%+ headcount cuts | Selective (↓ junior, ↑ senior) |
| CS enrollment | ↓ 30%+ decline | Slight decline, curriculum shifts |
| Bootcamp collapse | Mass closures | Pivoting to AI tool training |

The data shows transformation, not elimination.

The DORA Framework: AI as Amplifier

The 2025 State of DevOps (DORA) report provides the most comprehensive analysis: “AI is an amplifier. It magnifies the strengths of high-performing organizations and the dysfunctions of struggling ones.”

DORA identified seven organizational archetypes based on AI adoption success, including:

Struggling Teams (40% of orgs):

Foundational Challenges: AI intensifies existing dysfunction

Legacy Bottlenecks: Fast code generation, slow deployment

Process-Constrained: AI output sits in approval queues

Thriving Teams (40% of orgs):

Pragmatic Performers: Balance speed and quality, selective AI adoption

Harmonious High Achievers: “AI-native” platforms, agents as first-class citizens

The difference isn’t the AI. It’s the organizational readiness to verify, integrate, and deploy at scale.

The Real Risk: Not Obsolescence, But Consolidation

The honest version of what’s happening:

Entry-level consolidation is real. Fewer junior positions for routine implementation work. This is concerning and requires educational reform.

The verification burden is real. Code review time increases 91%, creating bottlenecks. This requires new tools and processes.

Quality debt is accumulating. Code duplication up 4x, bug rates up 9%. This requires cultural shifts toward “built-in quality.”

Mid-career transitions are harder. The path from junior to senior is steeper. This requires mentorship and intentional skill development.

Senior roles are more valuable than ever. The market pays premiums for judgment, architecture, and accountability. This creates opportunity for those who adapt.

This is not a picture of obsolescence. This is a picture of a profession shedding its commodity layer and concentrating around high-value work.

What Dario Actually Meant

Let’s reconstruct what Dario Amodei was actually saying at Davos, stripped of viral distortion:

The Claim: “Models can technically perform the tasks currently labeled ‘software engineering’ end-to-end.”

The Reality: This is true for routine, well-specified tasks in mature problem domains where verification is straightforward. It’s not true for novel algorithms, ambiguous requirements, cross-functional integration, regulatory compliance, or any work requiring judgment under uncertainty.

The Implication: The mechanical act of translating specifications into syntax—the “typing” part of programming—is being commoditized. The hard parts remain: figuring out what to build, whether it should be built, how it fits into complex systems, and who is responsible when it fails.

The Transformation: Engineers shift from code generators to system architects, verification specialists, and accountability owners. The profession doesn’t disappear—it elevates.

This is what the conductor metaphor captures: the instruments (AI) can play themselves, but someone must decide what the performance should sound like and own the outcome when the audience boos.

Conclusion: The Profession Isn’t Dying, It’s Evolving

Software engineering in 2026 is undergoing the same transformation that every knowledge profession undergoes when its mechanical tasks get automated:

The commodity layer collapses (routine coding)

Value migrates upward (architecture, judgment, accountability)

The skill premium inverts (knowing syntax → knowing what to build)

The profession stratifies (fewer generalists, more specialists)

Education lags (training for yesterday’s jobs)

But none of this equals “obsolete in 12 months.”

The viral claim is wrong not because AI isn’t powerful, but because it misunderstands what software engineering is. The profession was never primarily about typing. It was always about solving problems under constraints. The constraints include:

Technical feasibility (what can we build?)

Business value (what should we build?)

Risk management (what will break?)

Regulatory compliance (what are we allowed to build?)

Human factors (will people actually use this?)

AI can help with the first constraint. It struggles with all the others.

The engineers who treat AI as a threat are thinking about their jobs wrong. The engineers who treat AI as a tool to amplify their judgment are thinking correctly. And the engineers who recognize that accountability cannot be automated understand why the profession isn’t disappearing—it’s just finally shedding the parts that were always automatable anyway.

Dario Amodei didn’t say software engineering would be obsolete in 6-12 months. He said the mechanics of code generation would be largely automated. Those are different claims.

The viral tweet optimized for engagement, not accuracy. The result is panic where there should be adaptation, fear where there should be focus, and resignation where there should be resilience.

Software engineering isn’t dying. It’s growing up.

And that’s a very different story.

What to do about it:

If you’re a junior engineer: Focus on verification skills, system thinking, and cross-functional communication. Don’t just learn to prompt AI—learn to judge its output.

If you’re a senior engineer: You’re more valuable than ever. Invest in architecture, mentorship, and governance. The market needs you.

If you’re an educator: Rebuild curricula around judgment, not syntax. Teach students to evaluate systems, not just implement algorithms.

If you’re a hiring manager: Stop filtering for “years of experience with X language.” Start filtering for “ability to make sound technical decisions under uncertainty.”

If you’re panicking about the viral tweet: Take a breath. Look at the actual data. The profession is changing. It’s not disappearing.

The baton is still in human hands. The orchestra is just louder now.

The transformation vs obsolescence frame is right. But there's a more uncomfortable question buried in the data: transformation for whom?

Senior engineers with architectural judgment are fine. Entry-level developers who would have built that judgment through routine tasks - they have nowhere to start now. The automation removed the learning path, not the destination.

Labor market data showing senior roles growing doesn't tell you about the decade of mid-career experience you used to need to get there. That gap is the real story.