The $165 Billion Question: What The Economist Got Right (and Terribly Wrong) About Education Technology

A response to "Ed tech is profitable. It is also mostly useless"

Picture this: You’re sitting in a Kansas middle school in 2022, watching Principal Inge Esping make a bet. The district has approved IXL, adaptive math software on the state’s recommended list. It promises “instruction tailored to each student’s level, igniting quick gains.” The laptops are distributed. The software loads. As Esping later put it: “We thought it was going to be really magical.”

Three years later, the laptops are back in the closet. Paper and pencil have returned. The magic never happened.

This scene—real, documented, verified—opens The Economist’s January 2026 investigation “Failing the Screen Test.” The article’s thesis is blunt: educational technology is “mostly useless,” a $165 billion global industry that delivers marginal gains while test scores collapse. Independent research, the magazine claims, shows technology “rarely boosts learning in schools—and often impairs it.”

The article is directionally correct, intellectually dishonest, and dangerously incomplete. Here’s why it matters, and what the full evidence actually reveals.

The Part Where The Economist Is Devastatingly Right

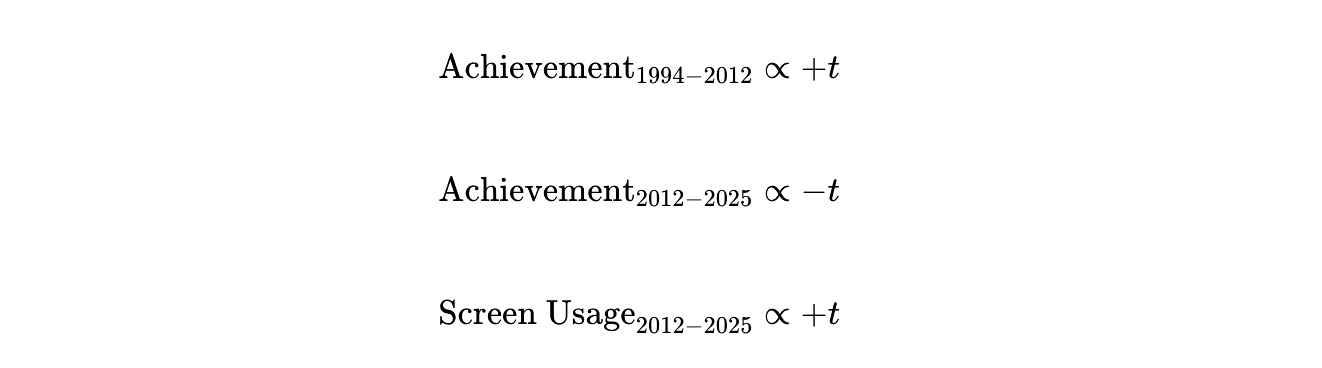

Start with the correlation that should alarm every school board member in America. From 1994 to 2012, student achievement rose steadily across 21 nationwide benchmark tests. The peak arrived precisely when screen usage began its exponential climb, between 2012 and 2015. Then achievement began falling. Not slowly. Consistently. Year after year.

The Programme for International Student Assessment (PISA) data is even more damning. The OECD found a negative relationship between heavy school computer use and student achievement in 2012. They confirmed it again in 2015. And again in 2018. The pattern is stark: students who use computers moderately at school do slightly better than those who rarely use them, but students who use computers most frequently score lowest of all.

Consider what this means. You are an 8th grader in 2024. If you report using computers heavily for schoolwork, your reading and math scores will be—on average—lower than a student who rarely touches a device. Not by a little. The difference can equal an entire year of learning.

The Economist presents this data accurately. The timing is too precise to ignore. The international consistency is too strong to dismiss. And there’s a mechanism that makes sense: distraction.

The 14-Point Gap That Equals One Lost Year

Here’s the number that should make superintendents lose sleep: 14 points.

Fourth-graders who used tablets in “all or almost all” classes scored 14 points lower on federal reading tests than students who never used tablets. On the National Assessment of Educational Progress (NAEP) scale, that’s roughly equivalent to one full year of schooling. Lost. The gap correlates directly with device saturation, not with poverty, teacher quality, or class size.
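The “one full year” equivalence depends on how many NAEP points you count as a year of learning, and that conversion is a rule of thumb rather than an official NAEP constant. A minimal sketch, using a hypothetical `points_per_year` parameter, shows how sensitive the headline is to that choice:

```python
# Convert a NAEP scale-score gap into approximate "years of learning".
# ASSUMPTION: points_per_year is a hypothetical rule-of-thumb input,
# not an official NAEP conversion factor.

def gap_in_years(gap_points: float, points_per_year: float) -> float:
    """Express a NAEP score gap as an approximate number of school years."""
    if points_per_year <= 0:
        raise ValueError("points_per_year must be positive")
    return gap_points / points_per_year


TABLET_GAP = 14  # fourth-grade reading gap cited in the article

for ppy in (10, 12, 14):
    print(f"{ppy} points/year -> {gap_in_years(TABLET_GAP, ppy):.1f} years")
```

Only a conversion of about 14 points per year makes the gap exactly one year; any smaller conversion factor makes the lost time larger.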

The Economist reports this correctly. The article is right that distraction is real. Right that gamification often emphasizes points over learning. Right that digital tools can “weaken human connection and empathy in the classroom.”

The magazine’s financial reporting is also solid. The $165 billion global market? Verified by multiple market research firms. The $30 billion U.S. K-12 spending in 2024? Confirmed by Education Week. The reality that 90% of high school students and 84% of elementary students have school-issued devices? Accurate. That 80% of kindergarteners—five-year-olds—are given personal learning devices? Disturbingly true.

When The Economist writes that “the prevalence of tech in schools owes less to rigorous evidence than aggressive marketing,” they are describing reality. Teachers report being “flooded with daily offers for free tech.” Districts make procurement decisions based on vendor presentations, not randomized controlled trials.

All of this is correct. All of it is concerning. And none of it tells the whole story.

The Stanford Study The Economist Misrepresented

Now we arrive at the intellectual crime that undermines the entire article.

The Economist writes: “A 2024 meta-analysis of 119 studies of early-literacy tech interventions, led by Rebecca Silverman of Stanford University, found the studies described programmes that delivered at best only marginal gains on standardised tests. The majority had little effect, no effect or harmful ones.”

This is not what the Stanford study found. Not even close.

The actual findings from Silverman’s meta-analysis of 119 studies (2010-2023):

Decoding: +0.33 standard deviations

Language comprehension: +0.30 SD

Reading comprehension: +0.23 SD

Writing proficiency: +0.81 SD
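Standard-deviation effect sizes are easier to read as percentile shifts. One common translation is Cohen's U3: the percentile of the comparison group that the average treated student reaches. This sketch assumes normally distributed scores with equal variance in both groups, an assumption the meta-analysis itself may not make:

```python
from statistics import NormalDist


# Cohen's U3: share of the control distribution that falls below the average
# treated student. ASSUMES normal outcomes with equal variance in both groups.
def u3_percentile(effect_sd: float) -> float:
    return 100 * NormalDist().cdf(effect_sd)


# Effect sizes reported for Silverman's meta-analysis, as listed above.
EFFECTS = {
    "Decoding": 0.33,
    "Language comprehension": 0.30,
    "Reading comprehension": 0.23,
    "Writing proficiency": 0.81,
}

for domain, d in EFFECTS.items():
    print(f"{domain}: +{d:.2f} SD -> percentile {u3_percentile(d):.0f}")
```

Under these assumptions, +0.33 SD in decoding moves a median student to roughly the 63rd percentile, and +0.81 SD in writing to roughly the 79th.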

Every single literacy domain showed positive effects, and writing showed large ones. Stanford’s own press release stated that “the analysis found positive effects on elementary school students’ reading skills overall, indicating that generally, investing in educational technology to support literacy is warranted.”

This is the opposite of “marginal gains with the majority showing little effect, no effect or harmful ones.” This is systematic evidence of benefit that varies by implementation quality and target skill.

The Economist cherry-picked a single sentence from a nuanced 40-page meta-analysis and inverted its conclusion. This is not interpretation. This is misrepresentation.

The Missing Evidence: Where Technology Actually Works

What happens when you look at the studies The Economist ignored?

Intelligent Tutoring Systems: A meta-analysis of 50 controlled evaluations found a median effect size of 0.50 SD. That’s substantial. Kulik and Fletcher (2016) found ITS “substantially outperforms traditional instruction” in mathematics.

AI Tutoring at Harvard: In 2024, researchers conducted an RCT comparing AI tutoring to active learning classrooms. Students using the AI tutor learned significantly more in less time. They reported higher engagement and motivation. This was published in Scientific Reports, peer-reviewed, methodologically sound.

High-Dosage Online Tutoring: The Tutoring Online Program (TOP) increased math performance by 0.20-0.23 SD using volunteer university students. Cost? $300-500 per student. Compare that to hiring a teacher.

Adaptive Learning Platforms: A 2024 meta-analysis examining technology for disadvantaged students found adaptive approaches produced effect sizes of 0.35 SD—substantially higher than non-adaptive tools.

These are not marginal gains. These are meaningful improvements in student learning. Why didn’t The Economist mention them?

Because it doesn’t fit the narrative.

The Cost-Effectiveness Calculation The Economist Never Did

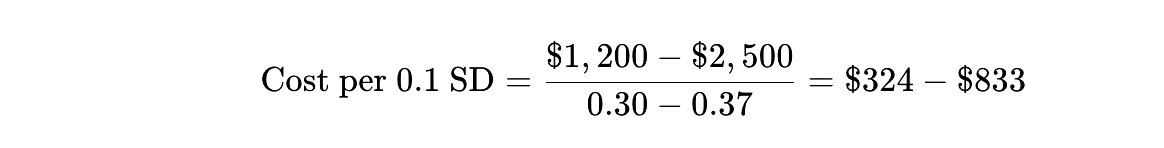

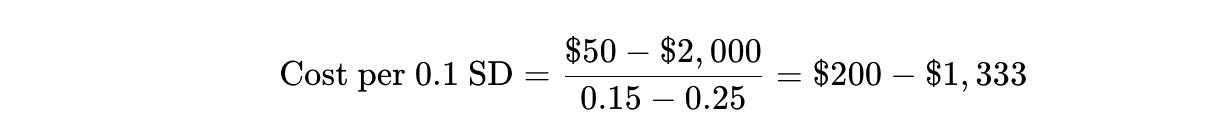

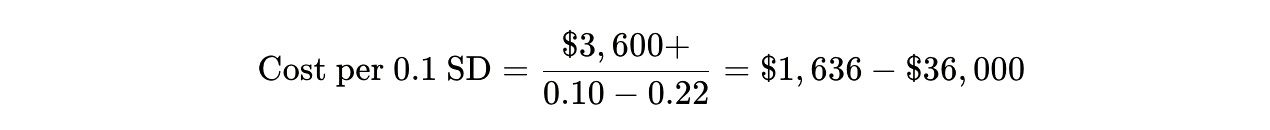

Here’s where narrative journalism intersects with cold mathematics. Let’s calculate the cost per 0.1 standard deviation gain in achievement—the “bang per buck” that actually matters.
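The metric itself is simple arithmetic: divide the per-student cost by the effect size and rescale to 0.1 SD. A minimal sketch, assuming effects scale linearly with spending (a strong simplification) and using the only cost-and-effect pair stated in this article, the Tutoring Online Program figures:

```python
# Cost per 0.1 SD of achievement gain.
# ASSUMPTION: the effect scales linearly with spending, which real
# interventions rarely do exactly.

def cost_per_tenth_sd(cost_per_student: float, effect_sd: float) -> float:
    """Dollars per student needed to buy a 0.1 SD gain."""
    if effect_sd <= 0:
        raise ValueError("effect_sd must be positive")
    return cost_per_student * 0.1 / effect_sd


# Tutoring Online Program figures quoted in this article:
# $300-500 per student for a 0.20-0.23 SD gain in math.
best_case = cost_per_tenth_sd(300, 0.23)   # cheapest cost, largest effect
worst_case = cost_per_tenth_sd(500, 0.20)  # highest cost, smallest effect
print(f"TOP: ${best_case:.0f} to ${worst_case:.0f} per student per 0.1 SD")
```

The same function works for any intervention once you have a per-student cost and an effect size from a comparable study.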

The comparison covers four options: the best AI and intelligent tutoring systems, high-dosage human tutoring, generic ed tech bought through typical procurement, and class size reduction (by seven students per class).

The story these numbers tell: The problem isn’t educational technology. It’s which educational technology.

The best adaptive systems—intelligent tutors, AI-powered feedback, targeted practice platforms—are the most cost-effective intervention in education. They deliver larger gains than class size reduction at 1% of the cost. They match human tutoring outcomes at 20% of the price.

But here’s the tragedy: these high-quality systems represent maybe 5-10% of current ed tech spending. The other 90% or more goes to:

Generic devices (Chromebooks, iPads) that enable distraction

Low-quality “drill and kill” software

Platforms optimized for engagement metrics, not learning transfer

Administrative overhead and IT support

The Economist is right that most ed tech spending is wasteful. They’re wrong about why.

The Inverted U-Curve: When More Becomes Less

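There’s a mathematical relationship the article hints at but never names. Education researchers call it the dosage curve. Psychologists know the same inverted-U shape from the Yerkes-Dodson law, and cognitive load theory suggests one mechanism: past a certain point, extra screen input adds more distraction than learning.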

It works like this:

$$\text{Learning gain} = f(\text{technology dosage})$$

where f is not linear but curvilinear: an inverted U.

At zero technology use, students miss essential digital literacy and efficient practice tools. At moderate, targeted use (30 minutes daily for specific skills), gains are maximized. At high use (multiple hours of screen time), distraction costs exceed learning benefits.

The OECD found exactly this pattern in PISA data. Moderate computer use: slight benefit over zero use. Heavy computer use: worse than no computers at all.

The Economist reports the extremes (high use = bad) but omits the middle (moderate, targeted use = good). This creates a false binary: technology helps or technology hurts. The truth is: technology’s impact depends almost entirely on dosage and implementation quality.
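A quadratic is the simplest function with an inverted-U shape, so it makes a convenient toy version of f. The coefficients below are hypothetical, picked only so the peak lands at the 30 minutes daily mentioned above and the gain turns negative past an hour; nothing here is fitted to PISA or any other data:

```python
# Toy inverted-U "dosage curve": learning gain as a function of daily minutes
# of screen-based instruction. HYPOTHETICAL coefficients, chosen only so the
# peak lands at 30 minutes and the gain turns negative past 60 minutes.
A = 0.006   # benefit per minute (arbitrary units)
B = 0.0001  # distraction cost, growing with the square of minutes


def gain(minutes: float) -> float:
    return A * minutes - B * minutes ** 2


def optimal_minutes() -> float:
    # Setting d(gain)/d(minutes) = A - 2*B*minutes to zero gives the peak.
    return A / (2 * B)


for m in (0, 30, 60, 120):
    print(f"{m:>3} min/day -> gain {gain(m):+.2f}")
print(f"peak at {optimal_minutes():.0f} minutes")
```

The point of the toy is the shape, not the numbers: any curve of this form rewards moderate use and punishes heavy use, which is why reporting only the extremes hides the middle.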

The Implementation Gap: Why McPherson Failed

Return to Principal Esping in Kansas. IXL didn’t fail because adaptive math software doesn’t work. A 2018 randomized controlled trial found IXL produced statistically significant effects around 0.13 SD in one district context. Not transformative, but measurable.

McPherson failed because of implementation collapse:

The Distraction Externality: Once devices are in students’ hands, every app is one click away. YouTube. Spotify. Games. The cognitive cost of resisting temptation exceeds the learning benefit.

The Surveillance Trap: Teachers became IT police, blocking sites and monitoring screens instead of teaching. The administrative burden consumed instructional time.

The Dosage Error: IXL was used for “most in-class independent maths work”—not targeted practice, but wholesale substitution of teacher instruction.

The Training Vacuum: No evidence suggests teachers received deep implementation training or ongoing support to use IXL’s diagnostic features effectively.

The Economist treats this as a technology failure. It’s actually an implementation failure—the predictable result of giving devices without guardrails, flooding teachers with tools without training, and expecting software to replace pedagogy.

Interestingly, the article mentions that Esping also banned smartphones, and that test scores then rose 5% in reading and math while suspensions dropped 70%. But The Economist doesn’t connect these dots: maybe the problem isn’t educational software. Maybe it’s recreational devices masquerading as educational tools.

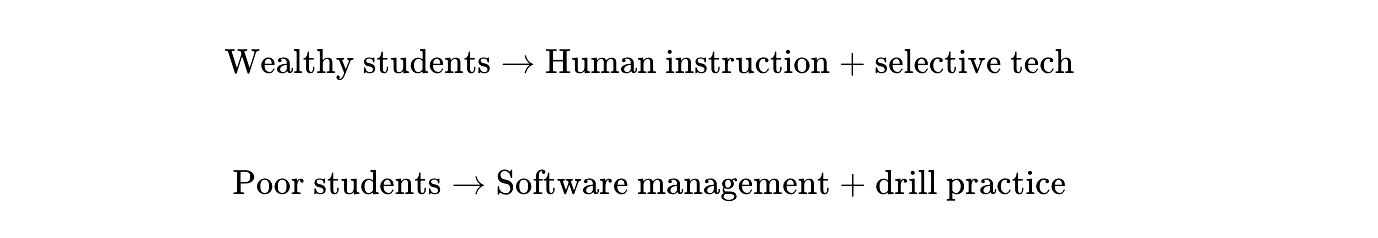

The Equity Paradox: Who Benefits, Who Loses

Here’s the finding that should most disturb policymakers: technology doesn’t impact all students equally.

The Silverman meta-analysis found that socioeconomic status moderates outcomes. Students from low-SES backgrounds showed larger effects from decoding-focused software. This makes sense: structured, repetitive digital practice can substitute for literacy support they don’t get at home.

But—and this is the cruel twist—high-poverty schools use computers more frequently and for low-level tasks. Wealthier schools use technology more sparingly, for creative projects and research.

The result? An algorithmic tracking system emerges.

Technology isn’t closing the achievement gap. In many implementations, it’s automating inequality.

The Economist completely misses this. The article presents technology as uniformly ineffective, when the evidence shows it can be highly effective for disadvantaged students—if used correctly. The problem is systemic misuse, not inherent technological limitation.

What The Economist Should Have Written

The honest headline would be: “We’re Spending $87 Billion on the Wrong Ed Tech.”

The nuanced story:

Generic device saturation: Wasteful and harmful. Creates distraction, displaces better instruction.

Intelligent tutoring systems: Cost-effective and proven. Underutilized.

Adaptive practice platforms: Work well for basic skills (math facts, decoding). Should be targeted, not universal.

Implementation quality: The dominant variable. Same software can produce +0.35 SD or 0.00 SD depending on teacher training and usage protocols.

The correct policy implication isn’t “abandon technology.” It’s “abandon the current procurement model.”

Imagine reallocating current ed tech spending:

Current allocation (approximate):

60-70%: Hardware and generic devices

20-30%: Low-quality software licenses

5-10%: High-quality adaptive/intelligent systems

5-10%: Training and support

Optimal allocation:

20%: Targeted devices (special needs, low-income students)

20%: High-quality adaptive systems (proven ITS platforms)

30%: High-dosage tutoring (human + AI hybrid)

30%: Teacher training and salary increases
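Applied to a concrete budget, the proposed split looks like this. The sketch assumes the pool is the $30 billion 2024 U.S. K-12 figure cited earlier; the article does not say which budget it has in mind:

```python
# Apply the proposed "optimal allocation" to a budget.
# ASSUMPTION: the budget is the $30 billion 2024 U.S. K-12 ed tech figure.
OPTIMAL_SHARES = {
    "Targeted devices": 0.20,
    "High-quality adaptive systems": 0.20,
    "High-dosage tutoring": 0.30,
    "Teacher training and salary increases": 0.30,
}


def allocate(budget: float, shares: dict[str, float]) -> dict[str, float]:
    total = sum(shares.values())
    if abs(total - 1.0) > 1e-9:  # reject splits that don't add up to 100%
        raise ValueError(f"shares must sum to 1, got {total}")
    return {item: budget * share for item, share in shares.items()}


for item, dollars in allocate(30e9, OPTIMAL_SHARES).items():
    print(f"{item}: ${dollars / 1e9:.1f}B")
```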

Effect? Better outcomes at lower cost. You could fund high-dosage tutoring for 10-15 million students with the money currently wasted on unused Chromebooks and engagement-optimized apps.
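The 10-15 million figure rests on an unstated per-student tutoring cost. A back-of-envelope sketch, assuming the freed-up money is the 60-70% hardware share of the $30 billion U.S. figure (an assumption, since the article doesn't define “money currently wasted”), recovers the cost per student that the estimate implies:

```python
# Back-of-envelope check on "tutoring for 10-15 million students".
# ASSUMPTION: the reallocated pool is the 60-70% hardware share
# of $30 billion in U.S. K-12 ed tech spending.
US_EDTECH_SPEND = 30e9


def implied_cost(pool: float, students: float) -> float:
    """Per-student cost at which `pool` dollars covers `students` students."""
    return pool / students


for share in (0.60, 0.70):
    pool = US_EDTECH_SPEND * share
    lo, hi = implied_cost(pool, 15e6), implied_cost(pool, 10e6)
    print(f"{share:.0%} pool (${pool / 1e9:.0f}B): ${lo:,.0f}-${hi:,.0f} per student")
```

Under those assumptions the estimate implies roughly $1,200 to $2,100 per tutored student, a useful number to check against the tutoring quotes a district actually receives.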

The Bill Gates Prophecy

The Economist ends with Bill Gates’ 2013 remark: “It would take a decade to know whether education technology really worked.”

Twelve years later, here’s what we know:

Technology works when it’s:

Adaptive and intelligent

Targeted at constrained skills (math facts, decoding)

Integrated with teacher instruction

Limited in duration (30-60 minutes daily)

Monitored for distraction

Technology fails when it’s:

Generic and substitutive

Used for complex comprehension tasks

Implemented without training

Available for unlimited duration

Unmonitored for misuse

The evidence is not ambiguous. We know what works. The problem is procurement practices, vendor incentives, and implementation infrastructure—not the technology itself.

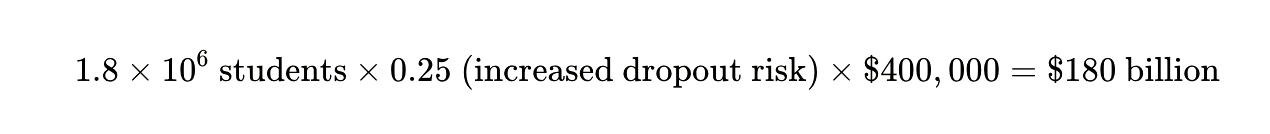

The True Cost of Getting It Wrong

Return to those fourth-graders who lost a year of learning: 14 points on federal reading tests, associated with heavy tablet use. Multiply that by the 3.6 million fourth-graders in U.S. public schools. Assume even half show similar losses.

You’re looking at 1.8 million students who may not recover that lost ground. Research shows that students who aren’t proficient readers by third grade are four times more likely to drop out. The lifetime earnings difference between a high school graduate and dropout? Approximately $400,000.

Put those figures together and you get the cost of getting ed tech wrong.
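The article supplies a cohort size, an affected share, and an earnings gap, but not how many affected students would actually drop out. A minimal sketch treats that as a hypothetical `extra_dropout_rate`; the 100% row is a ceiling, not an estimate:

```python
# Back-of-envelope lifetime-earnings cost for one cohort, using the article's
# figures. ASSUMPTION: extra_dropout_rate is HYPOTHETICAL; the article gives a
# relative risk ("four times more likely"), not an absolute dropout share.
FOURTH_GRADERS = 3.6e6
SHARE_AFFECTED = 0.5       # "assume even half show similar losses"
EARNINGS_GAP = 400_000     # lifetime earnings, graduate vs dropout


def cohort_cost(extra_dropout_rate: float) -> float:
    affected = FOURTH_GRADERS * SHARE_AFFECTED  # 1.8 million students
    extra_dropouts = affected * extra_dropout_rate
    return extra_dropouts * EARNINGS_GAP


for rate in (0.05, 0.10, 1.0):
    print(f"extra dropout rate {rate:.0%}: ${cohort_cost(rate) / 1e9:,.0f}B")
```

Even the low hypothetical rates put the cost in the tens of billions of dollars for a single cohort.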

That’s just one cohort. One year. One grade level.

The Economist is right to sound the alarm. They’re wrong about why it’s ringing.

Conclusion: The Question We Should Be Asking

The article ends with parent advocate Emily Cherkin imagining: “What if all that money had gone into teachers instead?”

It’s the wrong question. The right question is: “What if we had spent that money on proven interventions and stopped pretending every student needs a device?”

The evidence map is complete. The cost-effectiveness ratios are calculated. We know intelligent tutoring systems work. We know adaptive platforms work for basic skills. We know high-dosage tutoring works. We know teacher training works.

We also know device saturation without implementation support doesn’t work. We know gamified engagement apps don’t transfer to real learning. We know that substituting screens for human interaction harms young children.

The tragedy isn’t that we tried technology and it failed. The tragedy is that we’re still installing the wrong technology, in the wrong amounts, in the wrong ways, while the right approaches—proven, cost-effective, scalable—languish at 5% market share.

You’re a school board member. It’s budget season. A vendor pitches you on 1:1 Chromebooks for every kindergartener. The cost: $500,000 for devices, $200,000 annually for support.

The alternative: the same $700,000 (the device plan’s first-year cost) buys you an intelligent tutoring system for math, training for every teacher, and enough left over to hire two literacy interventionists.
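The vendor's pitch is a one-time device cost plus recurring support, so its price grows every year. A minimal sketch, assuming support is billed from year one and ignoring device replacement (the scenario doesn't mention it):

```python
# Multi-year cost of the 1:1 Chromebook pitch in the scenario.
# ASSUMPTION: support is billed from year one; device replacement is ignored.
DEVICES = 500_000
SUPPORT_PER_YEAR = 200_000


def device_plan_cost(years: int) -> int:
    return DEVICES + SUPPORT_PER_YEAR * years


for years in (1, 3, 5):
    print(f"{years} year(s): ${device_plan_cost(years):,}")
```

By year three the device plan alone costs $1.1 million. The alternative's recurring costs aren't specified, so this models only one side of the comparison.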

The Economist wants you to choose neither—to conclude technology is useless and return to 1995.

The evidence says: choose the second option. The math is simpler than it appears.

We’re more than a decade past Bill Gates’ remark, and past his deadline. The answer is clear. It’s time to stop debating whether technology works and start implementing what we know actually does.

The screens aren’t failing the test. We are.
