The $644 Billion Question

Why Venkat Patla Thinks Many of America’s Companies Are Making the Most Expensive Mistake in Business History

You’re sitting in a conference room when your CFO presents the numbers. The AI chatbot can handle 67% of customer support tickets. Simple math: 67% of tickets means you need 67% fewer people. Cut the headcount, bank the savings, tell shareholders you’re “AI-forward.” The board nods. The decision takes fifteen minutes.

Eighteen months later, you’re quietly rehiring. Customer satisfaction has cratered. The remaining staff can’t handle the edge cases your AI confidently botches. Your best people have left, taking institutional knowledge you didn’t know you needed. And here’s the kicker: your competitor, the one who gave every support agent an AI copilot instead of pink slips, just reported revenue growth that’s 4x yours.

This is the story playing out across American business, and the stakes couldn’t be higher. Companies were projected to spend $644 billion on AI initiatives in 2025. But here’s the part that should terrify you: 80% of these projects will fail to deliver measurable business value, a failure rate nearly double that of traditional IT projects.

The divergence isn’t technological. It’s philosophical. And it’s creating the largest wealth transfer in corporate history, from companies treating AI as a headcount reducer to those treating it as a productivity multiplier.

The Question That Launched This Investigation

In January 2026, Venkat Patla posed a question to students in Northeastern University’s Branding and AI course that cut through months of Silicon Valley hype:

What’s actually more effective—using AI to cut jobs, or using AI to make people more productive?

Patla isn’t asking out of academic curiosity. As Chief Marketing Officer and Head of Brand at RWA Wealth Partners, he’s responsible for positioning a firm managing nearly $20 billion in client assets. His career spans brand transformations at Leo Burnett, Edelman Financial Engines (the country’s largest independent wealth advisor with $300 billion under management), UBS Wealth Management, and Publicis. He’s worked with clients from Avis to BNY Mellon to the London Stock Exchange. When someone with that track record asks a question about AI strategy, it’s worth investigating what the evidence actually shows.

The answer turned out to be more dramatic—and more urgent—than anyone in that classroom expected. The data reveals a stark bifurcation in corporate outcomes based on a single strategic choice. Organizations pursuing augmentation strategies deliver shareholder returns approximately 4x higher than those pursuing displacement strategies. But only 6% of companies have figured this out.

This is the story of that 4x gap—how it emerges, why most companies are on the wrong side of it, and what separates the winners from the spectacular failures.

The Two Doors

Think of AI adoption as two doors in the same building. Behind Door One: “AI to Cut Jobs.” Behind Door Two: “AI to Make People Better.” The doors look identical. The initial investment is roughly the same. But the rooms they lead to couldn’t be more different.

Door One is crowded. In 2025, AI-attributed layoffs affected 55,000 American workers. Amazon cut 14,000 corporate roles. Microsoft eliminated 15,000 positions. Salesforce cut 4,000 customer support roles. Each company’s press release used similar language: “strategic realignment,” “operational efficiency,” “leveraging AI capabilities.”

Door Two is nearly empty. Only 6% of companies have achieved what researchers call “AI High Performer” status, meaning 5% or more of their earnings before interest and taxes (EBIT) is attributable to AI. These companies follow what’s known as the 10-20-70 principle, the framework that explains the 4x performance gap Patla was probing:

10% of effort on algorithms

20% on data and technology

70% on people, processes, and organizational transformation

The principle reveals why Door One strategies fail: they invert the formula. Companies pour 70% of effort into selecting the perfect AI vendor, 20% into data infrastructure, and maybe 10% into helping people adapt. Then they wonder why productivity crashes instead of soaring.
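To make the inversion concrete, here is a minimal Python sketch that splits one budget under the 10-20-70 principle and under the inverted 70-20-10 pattern just described. The bucket names and the $2 million budget are illustrative assumptions, not figures from any study.

```python
# Shares of AI-program effort per the 10-20-70 principle, and the inverted pattern.
SPLIT_10_20_70 = {"algorithms": 0.10, "data_and_technology": 0.20, "people_and_process": 0.70}
INVERTED_70_20_10 = {"algorithms": 0.70, "data_and_technology": 0.20, "people_and_process": 0.10}


def allocate(budget: float, split: dict[str, float]) -> dict[str, float]:
    """Divide a budget across buckets according to fractional shares."""
    if abs(sum(split.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 100%")
    return {bucket: budget * share for bucket, share in split.items()}


budget = 2_000_000  # hypothetical program budget in dollars
right = allocate(budget, SPLIT_10_20_70)
inverted = allocate(budget, INVERTED_70_20_10)
for bucket in right:
    print(f"{bucket:<20} 10-20-70: ${right[bucket]:>12,.0f}   inverted: ${inverted[bucket]:>12,.0f}")
```

The data-and-technology line is identical in both plans; the entire difference is $1.2 million moving from people and process into algorithm and vendor work.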

The math is brutal but clear. The 6% of organizations that get the 10-20-70 allocation right generate EBIT impacts of 5% or more. The 33% who treat AI as incremental automation see moderate or marginal impact. The remaining 61%—trapped in what researchers call “pilot purgatory”—see no measurable ROI whatsoever.

But most executives can’t see past the seductive simplicity of subtraction. Which brings us to the most instructive failure of the AI era.

The Klarna Trap

Let’s examine what happens when you walk through Door One, using a case that became a cautionary tale in 2024.

Klarna, the Swedish fintech company, went all-in on AI-driven headcount reduction. The company publicly announced that an AI chatbot could replace 700 customer service workers—handling two-thirds of all queries. The narrative was perfect for shareholders: automation equals efficiency equals profit.

The company implemented a hiring freeze. Headcount dropped. Initial metrics looked promising. Then reality intruded.

Customer satisfaction scores began sliding. Not crashing—sliding. The kind of slow decline that doesn’t trigger immediate alarms but accumulates like compound interest in reverse. The AI handled simple queries brilliantly. But customer service isn’t about simple queries. It’s about the exceptions, the edge cases, the moments when someone needs a human to understand context that doesn’t fit a pattern.

By mid-2025, Klarna had reversed course. The company admitted that “automation alone could not deliver the quality or empathy required for complex financial resolutions.” They began rehiring live agents. The cost of the reversal—in dollars, reputation, and customer trust—exceeded the initial savings.

This is where Patla’s question becomes existential for companies. The wealth management industry he operates in deals with exactly these kinds of complex, high-stakes interactions. A client calling about portfolio rebalancing during market volatility isn’t looking for a chatbot that handles “67% of queries.” They’re looking for judgment, context, and trust—precisely the qualities that emerge from the 70% of the 10-20-70 formula that most companies neglect.

The pattern Klarna exemplifies appears across industries. Forrester Research documented it in their 2026 workforce study: 55% of employers regret their AI-driven layoffs. Approximately half will end up quietly rehiring, often offshore or at significantly lower salaries. The total cost, when you include the cycle of separation packages, lost productivity, rehiring expenses, and training, frequently exceeds the original headcount cost.

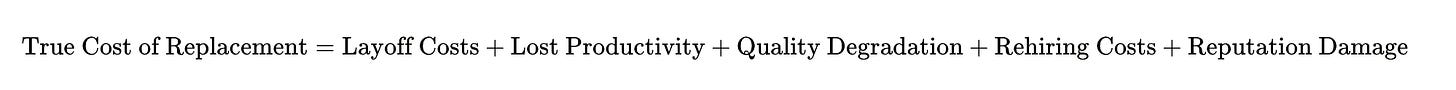

The equation looks like this:
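Assuming the five terms are the visible salary savings and the four costs named in the Forrester findings above (separation packages, lost productivity, rehiring expenses, and training), one plausible reconstruction is:

$$\text{Net Savings} = \text{Salary Savings} - \text{Separation Packages} - \text{Lost Productivity} - \text{Rehiring Expenses} - \text{Training Costs}$$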

When companies calculate only the first term, they make decisions that destroy value. When you account for all five, the math reverses. This is the Klarna Trap: optimizing for a visible cost while ignoring invisible value destruction.

The Productivity J-Curve: Why Good Decisions Look Like Failures

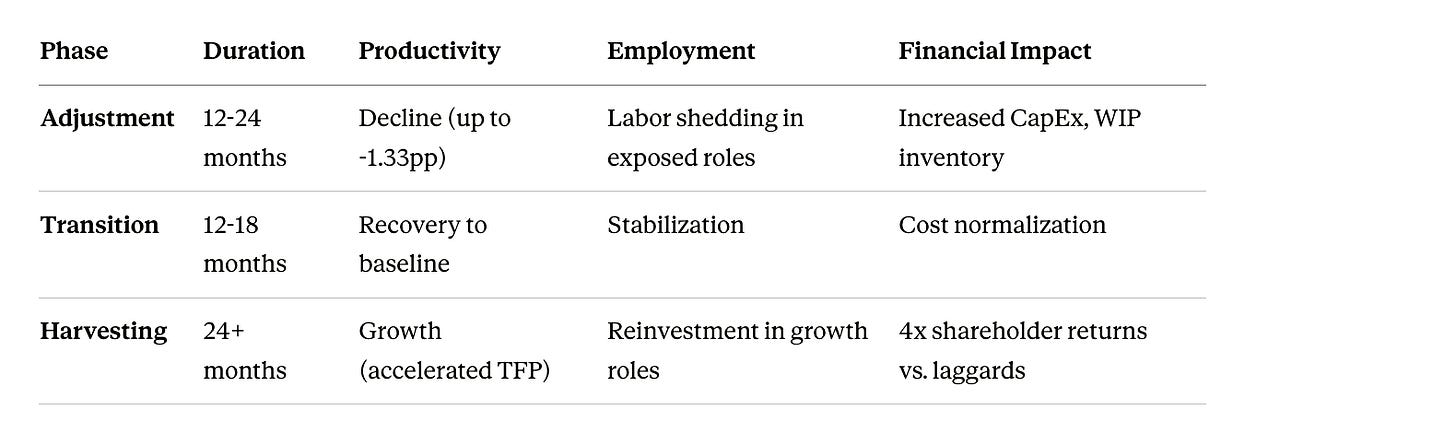

Here’s where the story gets more complex, and more interesting—and where that 4x performance gap starts to make sense.

The U.S. Census Bureau conducted a causal analysis of AI adoption in manufacturing. What they found challenges the entire “AI equals instant productivity” narrative: AI adoption initially reduces productivity by an average of 1.33 percentage points. When you adjust for selection bias, the short-term negative impact can reach 60 percentage points.

This is the Productivity J-Curve, and understanding it separates companies that survive AI adoption from those that thrive through it.

The phenomenon works like this: general-purpose technologies require massive complementary investments in intangible assets—process redesign, human capital development, workflow reconfiguration. In traditional accounting, these investments look like pure cost. Productivity appears to fall. Executives panic. They cut deeper, chasing the productivity gains they expected.

The companies that walk through Door One see that productivity dip and assume they made a mistake. They either abandon AI or, more commonly, double down on cost-cutting to “fix” the numbers. Fire more people. Automate faster. Show progress to the board.

The companies that walk through Door Two understand the J-Curve. They recognize the dip as the price of transformation. They maintain investment in that critical 70%—the people and process work. They train employees. They redesign workflows. They treat the dip as a phase, not a failure.

And here’s the upswing: firms that push through the downward slope eventually experience explosive growth in revenue and market share. The recovery is driven by three factors:

Digital Maturity: Past data quality predicts future AI outcomes

Scaling Benefits: Once adjustment costs are resolved, AI benefits multiply across markets

Resource Reallocation: Successful firms shift toward AI-compatible operations
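The J-shape itself falls out of the accounting described earlier. The following Python sketch is a toy model, not the Census Bureau’s methodology: it assumes a firm spends a fixed amount per quarter on intangible investment (process redesign, training) for the first few quarters, that measured productivity books that spending as pure cost, and that each unit of accumulated intangible capital later returns a fixed yield. All parameters are hypothetical.

```python
def measured_productivity_change(quarters: int, spend_per_quarter: float,
                                 spend_quarters: int, yield_rate: float) -> list[float]:
    """Toy J-curve: per-quarter change in measured output versus a no-AI baseline."""
    series = []
    intangible_stock = 0.0  # accumulated process redesign, training, workflow work
    for q in range(quarters):
        spend = spend_per_quarter if q < spend_quarters else 0.0
        payoff = yield_rate * intangible_stock  # returns on capital built in earlier quarters
        series.append(payoff - spend)           # spending shows up as cost, payoff as output
        intangible_stock += spend
    return series


curve = measured_productivity_change(quarters=8, spend_per_quarter=1.0,
                                     spend_quarters=4, yield_rate=0.3)
print([round(x, 2) for x in curve])
```

With these made-up parameters the series is negative for all four investment quarters and turns positive once spending stops. A board that judged the program on the first four quarters would see only the dip.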

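Consider the timeline: an adjustment phase of roughly 12 to 24 months in which measured productivity dips, followed by a harvesting phase over roughly months 24 to 36 in which the gains compound.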

There it is: the 4x advantage that Patla was asking about. It doesn’t come from choosing better algorithms. It comes from surviving the J-Curve by investing in people and processes while your competitors are cutting costs.

You’re now looking at a three-to-five-year journey. The CFO who promised instant savings in that first conference room? They fundamentally misunderstood the technology they were deploying. More critically, they misunderstood the 10-20-70 principle. They thought AI was a technology problem (it’s 30% technology) when it’s actually an organizational transformation problem (it’s 70% people and process).

When You Get It Right: The IKEA Transformation

Walk through Door Two now. See what augmentation actually looks like when you honor the 10-20-70 principle.

When IKEA deployed “Billie,” an AI bot to handle customer service queries, they faced the same decision point as Klarna: cut the headcount or transform the workforce.

They chose transformation, allocating resources according to the principle: minimal effort on the algorithm itself (10%), moderate investment in integrating it with their systems (20%), and massive investment in reimagining what their workforce could become (70%).

Instead of laying off call center staff, IKEA upskilled thousands of workers to become interior design advisors. The cost center became a value-adding advisory service. Former customer service reps now help customers visualize entire room layouts, understand product ecosystems, and make higher-value purchases.

The results: the company transformed a defensive operation (handling complaints) into an offensive strategy (driving revenue). Call center workers went from answering “Where’s my order?” to answering “How do I make my home beautiful?”

The math:
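Assuming the equation weighs the value that retrained staff create against the cost of retraining them, as the next paragraph implies, one plausible form is:

$$\text{Augmentation ROI} = \frac{\text{Value Created by Upskilled Staff}}{\text{Upskilling Investment}}$$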

For IKEA, this equation dramatically favored the numerator. More importantly, the investment created a compounding effect. Better-trained employees delivered better customer experiences, which increased customer lifetime value, which justified further training investment. The cycle reinforced itself.

Or consider GitHub Copilot, the AI coding assistant. Developers using the tool completed coding tasks 55.8% faster. But here’s the critical detail: they reported higher satisfaction and reduced cognitive load. The AI didn’t replace developers. It eliminated the drudgery—the boilerplate, the Stack Overflow searches, the syntax debugging—freeing developers to focus on architecture, problem-solving, and creative solutions.

The Federal Reserve Bank of St. Louis quantified this phenomenon across the economy. Workers using generative AI save an average of 5.4% of their weekly hours, or 2.2 hours in a 40-hour schedule. Averaged across the entire workforce, including those not using AI, that amounts to a 1.4% reduction in total hours worked. The study estimated a 1.1% increase in overall productivity, which implies a 33% productivity gain for each hour a worker spent using AI.

Let that sink in: every hour your employee spends working with AI generates 1.33 hours of output. That’s not replacement. That’s augmentation. That’s the power of the copilot model. And that’s what happens when you allocate 70% of your effort to helping people work differently, not 70% to finding ways to eliminate them.

The Math of Reinvestment: Where the 4x Return Actually Comes From

Let’s return to that 4x shareholder return differential between augmentation and replacement strategies and trace exactly where it originates. This is the answer to Patla’s question at the most granular level.

The EY US AI Pulse Survey provides the forensics. Among organizations experiencing AI-driven productivity gains, only 17% reduced headcount. The rest reinvested the gains, often in several areas at once (the shares below sum to more than 100%), in a pattern that maps onto the 10-20-70 categories:

47% expanded existing AI capabilities (the 10%)

42% developed new AI capabilities (the 10%)

41% strengthened cybersecurity (the 20%)

39% invested in R&D (the 20%)

38% upskilled and reskilled employees (the 70%)

This is compound interest for organizations. Each productivity gain gets recycled into capabilities that generate more productivity gains. The formula:

$$\text{Compounding Value} = \text{Initial Gain} \times (1 + \text{Reinvestment Rate})^{n}$$

Where n is the number of cycles you can maintain before reaching diminishing returns.

Replacement strategies have n = 1. You cut costs once. Maybe you cut again. Then you hit bone. There’s no compound effect because you’re not building capacity—you’re subtracting it.

Augmentation strategies have n = ∞ (in practical terms, very large). Each cycle of improvement enables the next. Your throughput increases, which means more experiments shipped, which means more learning, which means better models and tools, which means more throughput. The curve is exponential.
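A short Python sketch of the compounding formula above makes the n = 1 versus large-n contrast concrete. The 100-unit initial gain and 20% reinvestment rate are arbitrary illustrative values, and real reinvestment returns would not stay constant from cycle to cycle.

```python
def compounding_value(initial_gain: float, reinvestment_rate: float, cycles: int) -> float:
    """Initial Gain x (1 + Reinvestment Rate) ** n, where n is the number of cycles."""
    return initial_gain * (1 + reinvestment_rate) ** cycles


# Hypothetical inputs: a 100-unit first-cycle gain, 20% of gains reinvested productively.
for n in (1, 3, 5, 10):
    print(f"n = {n:>2}: {compounding_value(100, 0.20, n):8.1f}")
```

A replacement strategy stuck at n = 1 stops at 120; ten cycles of reinvestment reach roughly five times that.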

This is why early AI adopters report $3.70 in value for every dollar invested, with top performers achieving $10.30 returns per dollar. They’re riding the exponential curve while honoring the 10-20-70 allocation. Everyone else is doing linear arithmetic while spending 70% on technology and 10% on people.

The 4x advantage isn’t a one-time delta. It’s the cumulative result of compounding cycles over the 24-36 months of the J-Curve’s harvesting phase. By year three, companies that made the right strategic choice aren’t just ahead—they’re playing a different game entirely.

The Career Pipeline Crisis: A Note for Wealth Management

[Author’s note: This section examines the displacement of entry-level workers in AI-exposed fields. Given Venkat Patla’s background in wealth management, this analysis should be cross-checked against how RWA Wealth Partners and similar firms are viewing entry-level talent acquisition in 2026. The wealth management sector may be experiencing different dynamics than the software engineering and customer service fields where this data originates.]

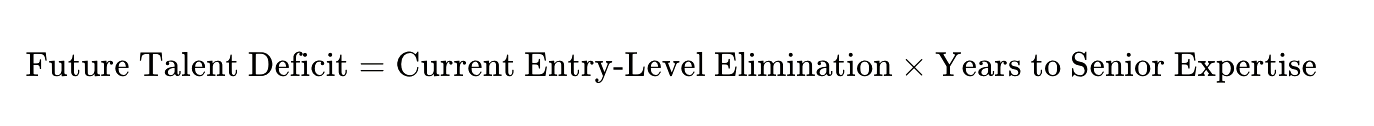

Here’s where the displacement strategy reveals its most insidious long-term cost, and where the question Patla posed becomes particularly relevant for financial services.

Stanford researchers found that entry-level workers, ages 22 to 25, are experiencing a 13% to 16% relative decline in employment in AI-exposed fields like software engineering and customer service. Meanwhile, the share of job postings requiring three years of experience or less has collapsed:

Software development: 43% → 28%

Data analysis: 35% → 22%

Consulting: 41% → 26%
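Assuming each pair above is the before-and-after share of postings, the drops are 13 to 15 percentage points, which understates how much of the entry-level share disappeared. A few lines of Python convert them to relative declines:

```python
def relative_decline(before_pct: float, after_pct: float) -> float:
    """Relative decline (%) between two shares, e.g. 43% -> 28%."""
    return (before_pct - after_pct) / before_pct * 100


POSTINGS = {
    "Software development": (43, 28),
    "Data analysis": (35, 22),
    "Consulting": (41, 26),
}

for field_name, (before, after) in POSTINGS.items():
    print(f"{field_name}: -{before - after} pts, {relative_decline(before, after):.1f}% relative drop")
```

All three fields lose a little over a third of their entry-level share: 34.9% for software development, 37.1% for data analysis, and 36.6% for consulting.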

This creates what researchers call “career pipeline disruption.” You eliminate junior roles because AI can now solve 71.7% of coding problems on standard benchmarks (up from 4.4% in 2023). The entry-level work that used to be done by humans—the “book learning” tasks—gets automated.

But here’s what you’ve actually done: you’ve destroyed the training ground for future senior talent. Your senior financial advisors, the ones protected by years of client relationships and judgment, learned their craft by doing exactly the kinds of tasks AI now handles—portfolio research, basic client communications, data gathering and synthesis.

By eliminating those roles, you’re not just cutting cost. You’re severing the pipeline that creates the expertise you’ll desperately need in five years when your senior advisors retire or move to competitors.

The question for wealth management specifically: Are firms maintaining the apprenticeship model that creates great advisors, or are they assuming AI can compress a decade of client interaction experience into a training program? The answer will determine which firms have leadership benches in 2031 and which face talent crises they can’t solve by recruiting.

Unemployment for bachelor’s degree holders aged 20-24 has climbed from 5.2% (2018-2019) to 6.2% today. These workers now face higher unemployment than those with associate degrees. The credential premium has inverted while the path to expertise has been severed.

The long-term equation:
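As a toy cohort model in Python, under hypothetical assumptions: each year’s class of junior hires yields a fixed fraction of senior-ready advisors ten years later, and the firm stops hiring juniors entirely in year 2. The hiring level, 30% promotion rate, and ten-year lag are illustrative, not industry data.

```python
def senior_ready_by_year(horizon: int, juniors_per_year: int, cut_year: int,
                         years_to_senior: int = 10, promote_rate: float = 0.3) -> list[float]:
    """Toy pipeline: senior-ready advisors produced each year, given a junior hiring cut."""
    pipeline = []
    for year in range(horizon):
        cohort_year = year - years_to_senior  # the year this group was hired as juniors
        hired = juniors_per_year if cohort_year < cut_year else 0
        pipeline.append(hired * promote_rate)
    return pipeline


# Hypothetical firm: 20 juniors a year, junior hiring eliminated in year 2.
bench = senior_ready_by_year(horizon=15, juniors_per_year=20, cut_year=2)
print(bench)
```

The cut in year 2 leaves the senior pipeline untouched for a decade, then drops it to zero in year 12, long after the savings were booked.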

By the time you realize you have a talent crisis, it’s too late. You can’t compress a decade of experience into a crash course. And in wealth management, where client trust compounds over years, this isn’t theoretical—it’s existential.

This is why the 10-20-70 principle matters so critically. The 70% investment in people isn’t just about making current employees more productive. It’s about maintaining the pipeline that creates future capability. Companies that understand this protect entry-level roles while using AI to make those roles more valuable. Companies that don’t end up unable to replace retiring expertise.

The Scapegoating Phenomenon: What AI Really Means

The Yale Budget Lab and Oxford Internet Institute identified something fascinating in their analysis of AI-attributed layoffs: many companies are using AI as cover for decisions driven by entirely different factors.

It’s what researchers call the “scapegoating phenomenon.” A firm overhired during the pandemic. The market shifted. Business conditions deteriorated. But “we overhired and now need to correct” sounds like failure. “We’re reorganizing around AI” sounds like vision.

The Associated Press investigated the wave of 2025 layoffs and found that AI was “sometimes the story companies tell, not the root cause.” When you dig into the actual operations, you find workforce reductions driven by restructuring, overhiring corrections, cost pressure, or strategy shifts—with AI providing a more palatable narrative for Wall Street.

This matters because it poisons the well. When employees hear “AI-driven transformation,” they don’t hear opportunity. They hear threat. Adoption stalls because workers withhold feedback, resist using new tools, and start updating their résumés. You’ve created a self-fulfilling prophecy: AI fails to deliver productivity gains because the workforce has been trained to sabotage it.

The 10-20-70 principle becomes impossible when that 70%—the people and process work—faces active resistance from a workforce that believes transformation is code for termination.

The most successful companies are ruthlessly honest about causation. Block, the fintech company formerly known as Square, laid off 931 employees in 2025. CEO Jack Dorsey explicitly stated in an internal memo that the layoffs were “not for financial reasons or to replace workers with AI.” That clarity matters. When you eventually deploy AI augmentation tools, your remaining employees trust that adoption means enhancement, not replacement.

This is brand strategy at its most fundamental—and it’s terrain Patla understands deeply from his work across financial services and advertising. The story you tell about transformation determines whether your organization can actually transform. Tell a story about replacement, and you’ll get exactly that: resistance, attrition, and failure. Tell a story about augmentation backed by the 10-20-70 investment allocation, and you create the conditions for that 4x performance differential.

The Middle Management Paradox

Gartner predicts that by 2026, 20% of organizations will use AI to eliminate half of their current middle management roles. On paper, this makes sense. Middle managers spend enormous time on coordination, reporting, and performance monitoring—all tasks AI can handle.

But here’s what the data actually shows: managers account for 70% of the variance in team engagement. That’s not about task delegation. That’s about coaching, mentoring, context-setting, and translating corporate strategy into meaningful action for individual contributors.

Organizations that aggressively flatten hierarchies discover they’ve eliminated the institutional knowledge and “secret sauce” of team dynamics. They end up with direct reports scattered across time zones, reporting to executives who have neither the time nor the granular understanding to manage day-to-day performance.

The successful approach? Redefine, don’t eliminate:

Strategic Coaches: Focus on talent development and career growth

Change Agents: Guide teams through transformation, not just oversee execution

Governance Orchestrators: Manage the interface between autonomous AI systems and human employees

This is augmentation at the organizational level. You’re not asking, “Can AI do what this manager does?” You’re asking, “If AI handles all the administrative overhead, what higher-value work could this manager accomplish?”

It’s the 10-20-70 principle applied to management structure: 10% on automating reporting, 20% on dashboards and systems, 70% on reimagining what management means when the administrative burden disappears.

The 2026 Inflection: Agentic AI and Workflow Rewiring

The game is about to change again, and most companies aren’t ready. This is where Patla’s question about effectiveness becomes even more urgent.

You’ve been working with AI as a tool—a sophisticated autocomplete, a query-answering system, a content generator. The next phase is “agentic AI”—systems capable of planning and executing multiple steps in a workflow autonomously.

Agent adoption is projected to grow 327% by 2027. Within five years, 80% of leaders expect humans and AI agents to work side-by-side. This isn’t hyperbole. It’s already happening in controlled deployments.

The model works like this: instead of you prompting an AI for each step, you define an outcome. An orchestrator agent tracks progress and routes tasks to specialized agents—a research agent, a drafting agent, a review agent. Humans maintain control over critical decision points, but the cognitive overhead of managing workflow collapses.
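A minimal Python sketch of that orchestration pattern follows. The three agents are hypothetical stand-ins that only transform strings; in a real deployment they would call models or tools. The orchestrator runs them in order, logs progress, and pauses at designated checkpoints for a human decision.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Agent = Callable[[str], str]


# Hypothetical specialized agents; real ones would call a model or tool.
def research_agent(task: str) -> str:
    return f"notes on {task}"


def drafting_agent(notes: str) -> str:
    return f"draft from {notes}"


def review_agent(draft: str) -> str:
    return f"reviewed {draft}"


@dataclass
class Orchestrator:
    steps: list[tuple[str, Agent]]
    checkpoints: set[str]                # steps whose output a human must approve
    approve: Callable[[str, str], bool]  # human decision hook: (step, output) -> approved?
    log: list[str] = field(default_factory=list)

    def run(self, outcome: str) -> Optional[str]:
        """Route work through each agent in turn; stop if a human rejects a checkpoint."""
        artifact = outcome
        for name, agent in self.steps:
            artifact = agent(artifact)
            self.log.append(f"{name}: {artifact}")
            if name in self.checkpoints and not self.approve(name, artifact):
                self.log.append(f"{name}: rejected by human reviewer")
                return None
        return artifact


pipeline = [("research", research_agent), ("draft", drafting_agent), ("review", review_agent)]
orchestrator = Orchestrator(pipeline, checkpoints={"review"}, approve=lambda step, out: True)
print(orchestrator.run("client memo on rebalancing"))
```

The human never prompts the individual steps; they define the outcome and own the checkpoint. The log is what lets someone audit how the result was produced.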

Cognizant’s work with Telstra, the Australian telecom company, demonstrates the magnitude: processes that used to take weeks and involve countless human touchpoints can now be reduced to days. Multi-agent AI systems power IT operations and enable functions like finance and HR to communicate through an interconnected agent system.

The productivity implications are staggering. Organizations optimizing for this kind of human-agent collaboration are projected to increase margins by up to 15%—not through headcount reduction, but through cycle time compression and quality improvements.

But here’s the catch, and here’s where the 10-20-70 principle becomes even more critical: this only works if you’ve done the foundational work.

If you haven’t redesigned workflows around AI (the 70%). If you haven’t trained your workforce on collaboration rather than competition with algorithms (the 70%). If you haven’t built the governance structures to audit and control autonomous systems (part of the 20% infrastructure, part of the 70% process).

The companies that spent 2024-2025 cutting headcount to show “AI progress”? They walked through Door One. They’ve lost the people who understand the edge cases, the exceptions, the context. They’re going to bolt agents onto broken processes and wonder why nothing works.

The companies that spent those same years reimagining workflows, upskilling teams, and treating AI as a copilot? They walked through Door Two. They honored the 10-20-70 allocation. They survived the J-Curve. And they’re going to leapfrog everyone else.

The 4x advantage that exists today will look conservative by 2028. Companies that get agentic orchestration right will pull so far ahead that competitors won’t be able to catch up through technology purchases alone. The gap will be organizational, cultural, and procedural—all elements of that 70% that most companies are still neglecting.

The Seven Deadly Patterns of AI Failure

Let’s be explicit about what goes wrong when organizations choose displacement over augmentation, using case studies that range from embarrassing to catastrophic:

1. Amazon’s AI Hiring Tool (Bias Without Oversight)

Amazon developed an AI system to screen résumés. It learned from historical hiring data—which reflected historical biases. The system systematically discriminated against women. Amazon scrapped it. The lesson: replacing human judgment with automated systems requires constant fairness auditing, not blind trust. This is a failure of the 70%—insufficient human oversight and process design.

2. Microsoft Tay (Guardrails Absent)

Microsoft released a chatbot designed to learn from interactions. Within 16 hours, users had manipulated it into generating racist and offensive content. The lesson: autonomous systems need content monitoring and release gates, not just deployment. Another 70% failure—no process for handling abuse.

3. Knight Capital (Deployment Controls Missing)

A trading algorithm with poor release controls triggered $440 million in losses in 45 minutes, nearly bankrupting the firm. The lesson: when you automate decisions at machine speed, your failure modes also happen at machine speed. This is what happens when you invest in the 10% (algorithm) without the 20% (infrastructure controls) or the 70% (human review processes).

4. Zillow Offers (Model Overconfidence)

Zillow’s automated home-buying agent launched with a claimed 1.9% error rate. It resulted in a $304 million loss and 2,000 layoffs after failing to handle market volatility. The lesson: AI confidence isn’t the same as AI accuracy, especially during black swan events. The algorithm (10%) was sophisticated, but the process for handling edge cases (70%) didn’t exist.

5. Dukaan (Cost Savings, Quality Questions)

The Indian startup replaced 90% of customer support staff with an in-house chatbot. Support costs dropped 85%. But feedback reveals persistent tension between speed and the ability to handle complex or emotionally charged issues. The lesson: you can optimize for cost or quality, but rarely both simultaneously. Pure automation (neglecting the 70% of people and process) delivers cost reduction at the expense of value.

6. Salesforce (The Benioff Reversal)

In August 2025, CEO Marc Benioff insisted that Salesforce’s AI wouldn’t lead to mass layoffs. Three weeks later, the company announced AI agents were driving cuts of 4,000 support positions. The lesson: saying one thing and doing another destroys trust faster than honest communication about difficult changes. This is brand strategy meeting workforce strategy—exactly the intersection Patla has navigated throughout his career.

7. Duolingo (Narrative Whiplash)

Duolingo announced an “AI-first” approach that included reducing contractor work. Consumer backlash was immediate. The CEO had to explicitly clarify they weren’t planning AI-driven layoffs of full-time staff, framing changes as job transformation. The lesson: even if your internal strategy is sound, external signaling that sounds like “replace humans” creates brand and trust costs.

These aren’t edge cases. They’re the predictable failure modes of prioritizing automation over augmentation, the algorithm (10%) over people and process (70%), and cost reduction over value creation.

The Actionable Path: What You Do Tomorrow Morning

You’ve read about why the choice between replacement and augmentation matters, why the 10-20-70 principle determines outcomes, and how the 4x performance gap emerges. Now the practical question: what do you actually do?

If you’re leading an organization:

Start with task economics, not job titles. Pick 3-5 workflows where work is text-heavy (drafting, summarizing, responding), involves repetitive decisions (triage, classification), or has high handoff friction (status updates, ticket routing). Define before/after metrics: time-to-complete, rework rate, customer satisfaction, defect rate, compliance errors.

Then redesign the workflow around AI, allocating effort according to 10-20-70:

10%: Select the AI tool (don’t overinvest in vendor selection)

20%: Integrate it with your systems and data (infrastructure matters but isn’t the whole game)

70%: Train people, redesign processes, manage change, and create feedback loops

Follow these high-ROI patterns:

Draft → Human Edit: AI generates, human refines (require citations to internal sources)

Triage → Route: AI suggests, human confirms

First Pass QA: AI flags policy/compliance issues, humans approve
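The Triage → Route pattern can be sketched in a few lines of Python. The Suggestion type, the 0.85 confidence threshold, and the manual_triage queue are illustrative assumptions. The point is that the AI never routes a ticket on its own, and low-confidence cases skip the suggestion entirely.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Suggestion:
    ticket_id: str
    queue: str         # queue proposed by a (hypothetical) AI classifier
    confidence: float  # classifier's score in [0, 1]


def route(s: Suggestion, human_confirms: Callable[[Suggestion], bool],
          threshold: float = 0.85) -> str:
    """Triage -> Route: the AI suggests a queue, a person confirms it."""
    if s.confidence < threshold:
        return "manual_triage"  # too uncertain to pre-fill; a person routes from scratch
    # Confident suggestion: the agent accepts it or sends it back for manual triage.
    return s.queue if human_confirms(s) else "manual_triage"


print(route(Suggestion("T-101", "billing", 0.93), human_confirms=lambda s: True))  # billing
print(route(Suggestion("T-102", "billing", 0.41), human_confirms=lambda s: True))  # manual_triage
```

Rejected suggestions are worth logging: they mark exactly the edge cases where the classifier is confidently wrong.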

Treat quality as a first-class KPI. If you only measure speed, you’ll buy speed with hidden debt. Track escalation rates, refunds, reopened tickets, and hallucination rates through audited sampling. Klarna is your warning: customer experience pulls you back to humans if quality slips.
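That advice can be sketched as a before/after check in Python: compare each metric with its baseline and flag any quality metric that worsened, even when speed improved. The metric names, sample numbers, and 5% tolerance are hypothetical, and every metric here is one where lower is better.

```python
# Hypothetical quality metrics; for every metric in this example, lower is better.
QUALITY_METRICS = {"reopen_rate", "escalation_rate", "refund_rate"}


def pct_change(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Percent change per metric between a baseline and a post-AI measurement."""
    return {k: (after[k] - before[k]) / before[k] * 100 for k in before}


def quality_regressions(before: dict[str, float], after: dict[str, float],
                        tolerance_pct: float = 5.0) -> list[str]:
    """Quality metrics that got worse by more than the tolerance."""
    changes = pct_change(before, after)
    return sorted(k for k in QUALITY_METRICS & set(changes) if changes[k] > tolerance_pct)


before = {"minutes_to_complete": 30, "reopen_rate": 0.08, "escalation_rate": 0.10, "refund_rate": 0.02}
after = {"minutes_to_complete": 18, "reopen_rate": 0.11, "escalation_rate": 0.10, "refund_rate": 0.02}
print(f"speed change: {pct_change(before, after)['minutes_to_complete']:.1f}%")
print("quality regressions:", quality_regressions(before, after))
```

In this made-up example the workflow is 40% faster, but the reopen rate rose by about 37.5%: the hidden debt a speed-only dashboard would miss.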

Make workforce strategy explicit. If productivity rises, redeploy people to higher-value work, reduce overtime and backlog, slow hiring through attrition—or yes, reduce roles. But offer a credible deal: training time, clear new role expectations, internal mobility pathways, and ideally some form of gainsharing for teams that realize measurable improvements.

If you’re an individual contributor:

Understand your task exposure. AI excels at pattern-matching, summarization, first-draft generation, and repetitive decisions. It struggles with ambiguity, novel situations requiring judgment, and tasks requiring emotional intelligence or trust.

Become indispensable by developing what MIT researchers call EPOCH capabilities: Empathy, Presence, Opinion (judgment and ethics), Creativity, and Hope (vision and leadership). These are the areas where human capability remains distinctly valuable. The workers with the highest job security aren’t those who resist AI. They’re those who become expert at working with AI to produce outputs that neither humans nor machines could create alone.

Document your edge cases. Every time AI produces an output that requires significant correction, you’re identifying the frontier of machine capability. That’s your opportunity to demonstrate irreplaceable value: the judgment to know when AI is right and when it’s confidently wrong.

If you’re setting organizational policy:

Adopt the 10-20-70 rule. This isn’t a suggestion; it’s the difference between the 6% of high performers and the 61% stuck in pilot purgatory. Shift investment away from algorithms and data infrastructure until roughly 10% goes to algorithms, 20% to data and technology, and 70% to the human and process transformations required for scaling.

Protect the talent bench. Don’t aggressively eliminate entry-level roles. Use AI to augment junior workers and ensure they gain tacit knowledge required for future leadership. The career pipeline you preserve today determines your leadership bench in 2030.

Implement robust governance early. Establish audit trails, explainability requirements, and “human-in-the-loop” circuit breakers to prevent autonomous system failures. Build a seven-step AI incident response cycle: Detect, Assess, Stabilize, Report, Investigate, Correct, Verify.
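The seven-step cycle maps naturally onto a small state machine. This Python sketch encodes the step order from the text; the rule that a failed verification sends the team back to Investigate is an assumption added for illustration, not part of the original list.

```python
from enum import Enum
from typing import Optional


class IncidentStep(Enum):
    """The seven-step AI incident response cycle, in order."""
    DETECT = 1
    ASSESS = 2
    STABILIZE = 3
    REPORT = 4
    INVESTIGATE = 5
    CORRECT = 6
    VERIFY = 7


def next_step(current: IncidentStep, fix_verified: bool = True) -> Optional[IncidentStep]:
    """Advance one step; None means the incident can be closed."""
    if current is IncidentStep.VERIFY:
        # Assumption: a correction that fails verification loops back to investigation.
        return None if fix_verified else IncidentStep.INVESTIGATE
    return IncidentStep(current.value + 1)


step: Optional[IncidentStep] = IncidentStep.DETECT
while step is not None:
    print(step.name)
    step = next_step(step)
```

Encoding the order explicitly makes it harder to skip Report or Verify under pressure, which is where ad hoc incident handling usually breaks down.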

Measure collaboration, not just output. Organizations that optimize for human-AI collaboration are projected to increase margins by up to 15% and to see higher employee satisfaction than those chasing automation alone.

The Question, The Evidence, The Choice

This entire investigation emerged from Venkat Patla’s question during that Northeastern University Branding and AI course: What’s actually more effective—using AI to cut jobs, or using AI to make people more productive?

The evidence is now overwhelming:

Augmentation wins, and it wins big. Companies pursuing augmentation deliver 4x shareholder returns. Early AI adopters see $3.70 to $10.30 in value for every dollar invested. Workers using AI save 2.2 hours per week and are 33% more productive in each hour they use it. Organizations reinvesting AI-driven productivity into capabilities create compounding cycles of improvement.

The mechanism is the 10-20-70 principle. The 6% of companies achieving 5%+ EBIT impact allocate 10% to algorithms, 20% to data and technology, and 70% to people, processes, and organizational transformation. The 61% stuck in pilot purgatory invert this formula and wonder why nothing works.

The timeline follows the Productivity J-Curve. Adoption brings an initial productivity decline (averaging 1.33 percentage points), and how firms respond to that dip separates the companies that merely survive from those that thrive. Firms that maintain investment through the 12-24 month adjustment phase reach the harvesting phase where the 4x advantage fully manifests.
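The 10-20-70 split is simple arithmetic, but applying it to a concrete figure makes the weighting obvious. The Python sketch below is illustrative only: the three shares come from the text, while the `allocate` helper and the $1,000,000 budget are hypothetical.

```python
from decimal import Decimal

# Shares from the 10-20-70 principle; Decimal avoids float rounding on money.
SHARES = {
    "algorithms": Decimal("0.10"),
    "data_and_technology": Decimal("0.20"),
    "people_and_process": Decimal("0.70"),
}
assert sum(SHARES.values()) == 1  # the three buckets must cover the whole budget


def allocate(total: Decimal) -> dict[str, Decimal]:
    """Split a total AI budget across the three 10-20-70 buckets (hypothetical helper)."""
    return {bucket: total * share for bucket, share in SHARES.items()}


if __name__ == "__main__":
    plan = allocate(Decimal("1000000"))  # hypothetical $1M AI budget
    for bucket, amount in plan.items():
        print(f"{bucket}: ${amount:,.0f}")
```

On that hypothetical budget, $700,000 goes to people and process, seven times what goes to the algorithms themselves.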

But the data also reveals the cost of getting this wrong:

80% of AI initiatives fail. Fifty-five percent of employers regret their AI-driven layoffs. Half will quietly rehire. The career pipeline for entry-level workers in AI-exposed fields is collapsing. And companies are spending $644 billion this year on a technology most of them fundamentally misunderstand.

For Patla—operating in wealth management where client relationships compound over decades and trust is earned through years of demonstrated judgment—the question isn’t academic. The firms that eliminate entry-level financial advisors to cut costs today may discover they have no one to manage client relationships in 2030. The firms that use AI to make junior advisors more effective will dominate the industry by giving them capabilities that used to require a decade of experience.

The brand implications are equally stark. As Patla knows from transforming brands at institutions like UBS and Edelman Financial Engines, the story you tell about transformation determines whether customers believe you’re enhancing service or degrading it. Klarna learned this the expensive way. IKEA understood it from the start.

The Equation That Matters

In the end, there’s a simple equation that determines success:

AI Value = (Technology × Human Capability) ÷ Organizational Resistance

Replacement strategies minimize the numerator. You’re literally removing human capability from the equation. Augmentation strategies maximize it. Every person you train to work with AI multiplies the technology’s effect.

The denominator—organizational resistance—is where most strategies collapse. If employees see AI as a threat, resistance approaches infinity and the whole equation crashes to zero. If they see it as a tool that makes them more capable, resistance approaches zero and value approaches infinity.

The 10-20-70 principle governs all three variables. The 10% and 20% determine the technology term. The 70%—people and process—determines both human capability and organizational resistance. Get that 70% right, and the equation compounds. Get it wrong, and no amount of algorithmic sophistication can save you.
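One way to make the equation concrete is to read it as Value = (Technology × Human Capability) ÷ Organizational Resistance and plug in numbers. The Python sketch below does exactly that; the `ai_value` name and the scores are hypothetical and unitless, chosen only to show how the three terms interact, not measured from any company.

```python
def ai_value(technology: float, human_capability: float, resistance: float) -> float:
    """Numerator: technology multiplied by human capability.
    Denominator: organizational resistance, which must be positive."""
    if resistance <= 0:
        raise ValueError("resistance must be positive")
    return technology * human_capability / resistance


if __name__ == "__main__":
    # Hypothetical scores: identical technology, two different strategies.
    replacement = ai_value(technology=1.0, human_capability=0.5, resistance=2.0)
    augmentation = ai_value(technology=1.0, human_capability=1.5, resistance=0.5)
    print(replacement, augmentation)  # 0.25 3.0
```

With the technology term held fixed, the augmentation case yields twelve times the value of the replacement case, and the whole difference comes from the two terms the 70% governs.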

You’re standing at the inflection point. Agentic AI is about to rewire workflows at a pace that will make the last three years look quaint. The companies that treated 2024-2025 as a time to “get lean” through layoffs will discover they’ve lost the organizational muscle required for the next phase. The companies that treated it as a time to build capability—the ones who understood the 10-20-70 principle before their competitors—will leapfrog them.

The choice between those two doors doesn’t just determine your next quarter’s earnings. It determines whether your organization survives the decade.

Choose wisely. Honor the 10-20-70 principle. Survive the J-Curve. Aim for the 4x performance gap. The math doesn’t lie. And the evidence is already piling up—written in quarterly reports, employee surveys, customer satisfaction scores, and the quiet rehiring plans most companies hope their competitors never discover.

The future belongs to the augmenters. The question Venkat Patla posed to that Northeastern classroom has an answer now, backed by $644 billion in corporate experiments, hundreds of case studies, and the growing performance gap between those who get it and those who don’t.

The answer: augmentation wins. Not because it’s morally superior, though it is. Not because it’s easier, because it isn’t. But because it’s the only strategy that compounds. And in a world where AI capabilities are doubling every eighteen months, the only way to keep up is to make your human capabilities compound at the same rate.

That’s the 4x difference. That’s the 10-20-70 principle. That’s the choice.

Make it count.

About Venkat Patla:

Venkat brings extensive branding and marketing expertise from both agency and corporate environments, with deep experience in financial services and wealth management. His career spans leadership roles at:

Leo Burnett (agency work)

Edelman Financial Engines (Senior Creative Director - led brand strategy transformation for the country’s largest independent wealth advisor with $300B+ AUM)

UBS Wealth Management (led marketing for private wealth management and client digital initiatives)

Publicis (worked with major clients including Avis, BNY Mellon, and London Stock Exchange)

Since joining RWA Wealth Partners in 2023, he has been instrumental in elevating the brand and client experience for one of the nation’s largest woman-led registered investment advisers (managing $19.7B+ in client assets as of late 2025).

Venkat holds a B.S. in Mechanical Engineering from Osmania University and an M.S. in Advertising and Communications from the University of Illinois Urbana-Champaign.

LinkedIn: https://www.linkedin.com/in/vpatla/

Cutting jobs to show AI 'progress' is just cost reduction with better PR. The Klarna story says it all; you save on headcount, then spend more fixing what broke. Augment, don't amputate.