The Eightfold AI Match Score

Ever wondered what Dunder Mifflin might look like in 2026?

The algorithm learned from companies like Dunder Mifflin. It learned from managers like Michael Scott. It did not know that Michael was the problem. It thought Michael was the signal.

The case starts here. Eightfold AI trained its hiring algorithm on real decisions made by real managers — which means it learned everything those managers got wrong. Toby Flenderson has been trying to document this for fifteen years. Nobody listened. Now it's running at scale.

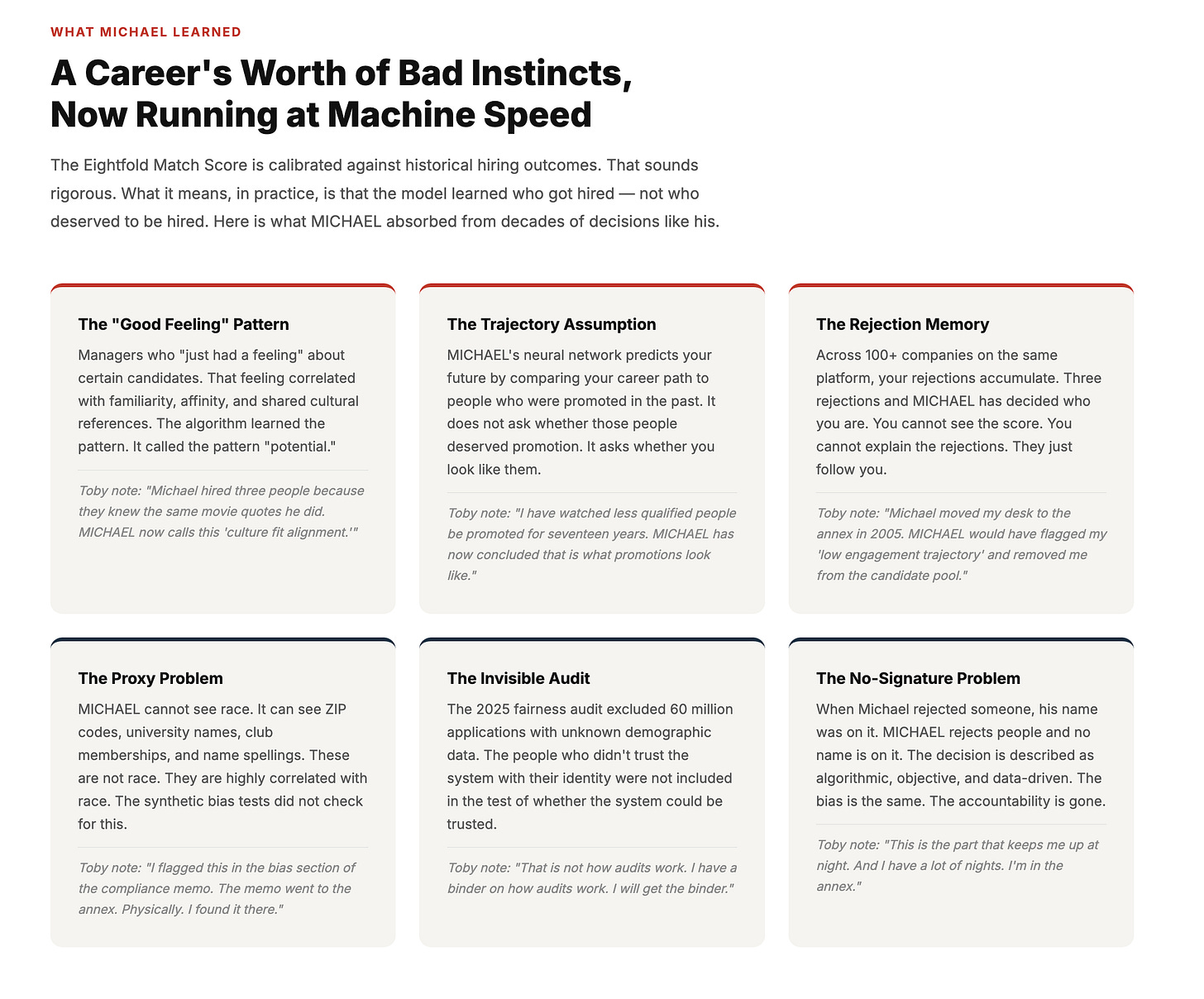

The algorithm didn't introduce new biases. It inherited the old ones and removed the signature. Here is what MICHAEL absorbed — and what Toby's notes say about each pattern.

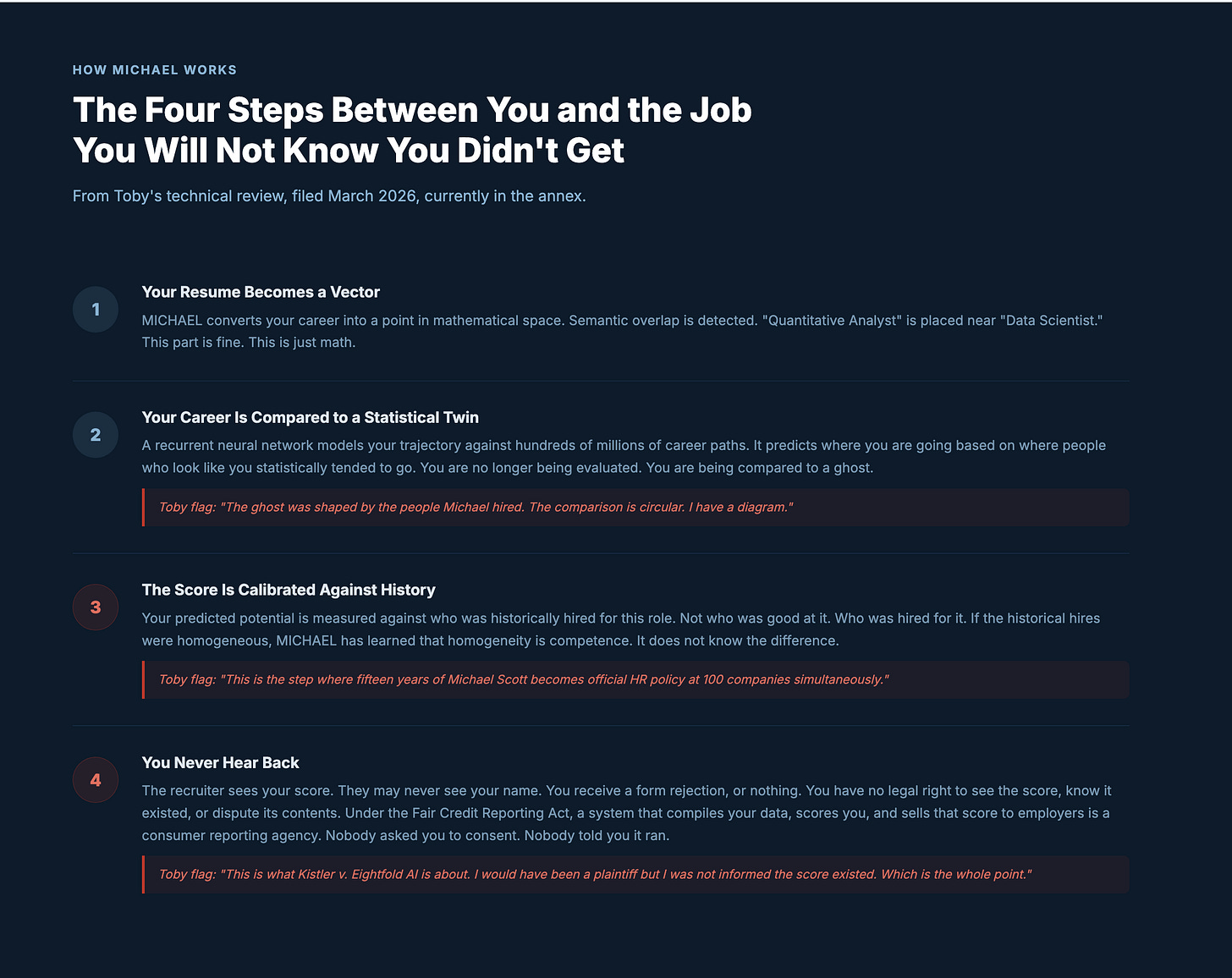

Four steps stand between your application and a human being who might actually read it. Most candidates never make it through. This comes from Toby's technical review, filed March 2026 and currently in the annex.

Toby filed this memo in March 2026. He filed three copies. Zero were read. The requests inside — disclose the score, allow disputes, fix the audit — are the same things the Kistler lawsuit is now asking a federal court to require.

This is the sentence that matters. The model is not malfunctioning. It is doing exactly what it was trained to do. The training data was us. That was always going to be the problem.

Tags: Eightfold AI Match Score FCRA, algorithmic hiring consumer report, Kistler v. Eightfold AI 2026, talent intelligence platform bias audit, recurrent neural network career trajectory hiring