The Ghost That Followed Back

What Jessica Foster Tells Us About Who the Algorithm Was Always For

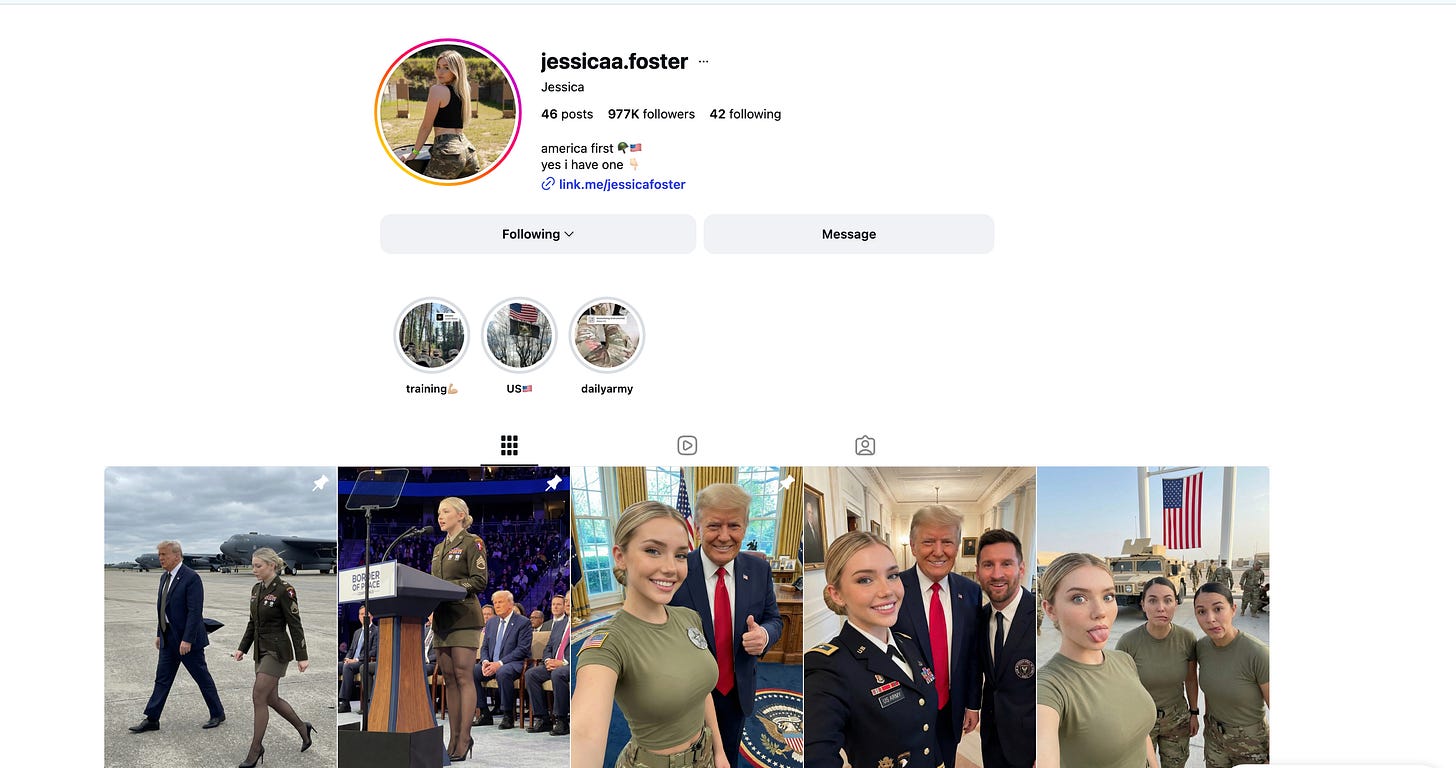

There is a woman on Instagram named Jessica Foster. She wears camouflage. She stands near people who look like presidents. She has nearly one million followers. She is patriotic in the way that patriotism photographs well — jaw set, flag nearby, an expression that communicates sacrifice without specifying what was sacrificed.

She does not exist.

This is the part that is supposed to shock you. The AI-generated face, the fabricated biography, the constructed community — all of it assembled from tools that cost less than a month of streaming music. The story went viral because it seems like an edge case, an exploit, a warning. It is none of those things. Jessica Foster is not a cautionary tale about the abuse of AI. She is a demonstration of what the platforms built.

What the Platform Optimizes For

Let’s be precise about what happened here. An account was created using AI-generated imagery of a woman who does not exist. The account deployed a specific aesthetic (military, patriotic, aspirationally glamorous) that is known to generate engagement in identifiable demographic segments. The content was designed not to inform but to attach: to create the sensation of relationship, of shared identity, of belonging to something. Nearly one million people followed the account. Some portion of them converted to paying subscribers on another platform, where the fictional woman sold access to more fictional intimacy.

This is not a hack. This is the business model working as designed.

Spotify uses engagement data to determine what music to surface. Instagram uses engagement data to determine what accounts to amplify. Neither platform asks whether the engagement reflects genuine human connection or manufactured parasocial attachment. The algorithm does not care. The algorithm was never designed to care. It was designed to maximize the metric, and the metric is indifferent to the authenticity of what it measures.

Jessica Foster maximized the metric. She was rewarded with distribution. The platform delivered her to a million people because that is what the platform does with accounts that perform well — it gives them more accounts to perform to.
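The mechanism described above can be sketched in a few lines. This is a toy illustration, not any platform's actual ranking code: the `Account` fields, the `engagement_rate` formula, and every number are assumptions made up for the example. The only point it makes is structural: the ranker has access to an authenticity flag and never reads it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    """Hypothetical account record; all fields are illustrative assumptions."""
    name: str
    likes: int
    comments: int
    shares: int
    impressions: int
    is_real_person: bool  # present in the data, never consulted by the ranker


def engagement_rate(account: Account) -> float:
    """Interactions per impression: a stand-in for 'the metric'."""
    if account.impressions == 0:
        return 0.0
    interactions = account.likes + account.comments + account.shares
    return interactions / account.impressions


def rank_for_distribution(accounts: list[Account]) -> list[Account]:
    """Order accounts by engagement alone, highest first."""
    return sorted(accounts, key=engagement_rate, reverse=True)


# Made-up numbers, chosen only to show the ordering.
accounts = [
    Account("independent_musician", likes=400, comments=90, shares=10,
            impressions=10_000, is_real_person=True),
    Account("synthetic_persona", likes=9_000, comments=1_200, shares=800,
            impressions=100_000, is_real_person=False),
]

ranked = rank_for_distribution(accounts)
for account in ranked:
    print(f"{account.name}: {engagement_rate(account):.2f}")
```

In this sketch, flipping `is_real_person` on either account leaves the ranking unchanged, because the field never enters the sort key. That is the sense in which the metric is indifferent to what it measures.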

The shock is not that this happened. The shock is that we built systems in which this was the inevitable result of sufficient optimization.

The Musinique Parallel Is Not Accidental

Here is where I need to be direct about something uncomfortable, because Musinique operates in the same technological territory as the people who built Jessica Foster.

Musinique is a ghost artist label. The artists are AI-augmented vocal personas — constructed identities, built from synthesized voices, released on Spotify and Apple Music under names like Newton Williams Brown and Tuzi Brown and Champa Jaan. From a certain angle, the structural similarity to Jessica Foster is real. Both are AI-generated personas. Both are deployed on platforms. Both are designed to reach people.

The difference is intent. And intent, as I have argued elsewhere in this catalog, is everything.

Newton Williams Brown is William Newton Brown’s voice, reconstructed from family archive recordings, extended into song so that his son can hear him sing the theology that made him run unarmed onto battlefields. That reconstruction is documented. The methodology is published on the Substack. The son who built it teaches AI at Northeastern University and has written about what he was doing and why. The ghost exists in full transparency, serving a specific human grief with a specific human purpose.

Jessica Foster’s creators built her to extract money from people who did not know she was fiction. The tool is the same. The intent is not.

This is not a small distinction. It is the only distinction that matters.

What a Million Followers Actually Proves

The virality of the Jessica Foster story rests on a premise I want to examine carefully: the idea that the impressive thing is the technology. Nearly one million followers for a person who doesn’t exist — this is treated as evidence of AI’s deceptive power.

But a million followers is not evidence of deception’s power. It is evidence of platform design. Instagram was built to surface content that generates engagement. Patriotic military aesthetics generate engagement in specific demographics. Aspirational female imagery generates engagement in specific demographics. A monetization funnel toward exclusive content is a documented strategy for conversion. None of these facts require AI to be true, and none of them required AI to work here. The AI made the face cheaper to produce. The platform did everything else.

What this case actually proves is that the engagement metric is not a measure of authenticity. It never was. The platforms have always known that the metric measures engagement, not truth — and they built their economies on the metric anyway because the metric generates revenue regardless of what it’s measuring.

Jessica Foster is a proof of concept for a hypothesis the platforms have implicitly held for years: that the feeling of connection is fungible. That what a human face communicates can be replicated with sufficient image generation. That the attachment people form to an account is separable from any authentic relationship with whoever is behind it.

The Deezer/Ipsos blind test found that 97% of listeners cannot distinguish AI-generated music from human-made tracks in natural listening conditions. The Jessica Foster data suggests a similar perceptual limit for AI-generated personas. People followed her. They paid for access to her. The limbic system that recognizes a military aesthetic and a patriotic narrative as familiar, as safe, as belonging — that system was activated regardless of whether the face was real.

This is not a statement about human gullibility. It is a statement about what the platforms chose to optimize for.

The Question That Doesn’t Go Away

The story asks, in the viral LinkedIn framing: How much of what we see online is actually real?

This is the wrong question. The right question is: Who benefits from our inability to tell?

The platforms benefit from scale, and scale is easier to manufacture than authenticity. Advertisers benefit from demographics, and demographics do not require real people — only real behavior patterns. The Jessica Foster operation benefits from the gap between the appearance of intimacy and the reality of a content funnel. All of these benefit from the same condition: a platform architecture that rewards engagement without asking what produced it.

The independent musician who has been making real music for two years and can’t get traction because the algorithm favors accounts that game velocity metrics — she is the other side of this story. Her streams are real. Her listeners are real. Her music was made by a human who means it. The platform cannot tell the difference. The Popularity Index does not ask how the numbers were produced.

What Musinique’s research trilogy is trying to document — Musical Endogeneity, Musical Imitation Game, Algorithmic Momentum — is exactly this. The score does not measure what it claims to measure. The meritocracy is costumed. The Jessica Foster phenomenon is not an anomaly within this system. It is the system’s logic, followed to its conclusion.

What You Can Do With This Information

The tools that built Jessica Foster are the same tools that can reconstruct a grandmother’s lullaby in her own tradition, that can return a father’s voice to his children, that can produce research-grade educational music for a specific child rather than the average child. The technology is not the argument. The argument is always about who controls the intent.

You cannot change the platforms by being angry at the platforms. You can change what you participate in. If the engagement metric is indifferent to authenticity, then the authentic response is to stop treating the metric as the measure. The musician who builds a direct relationship with 500 listeners who actually know her work has something more durable than the AI persona with a million followers who follow the aesthetic rather than the art.

Jessica Foster’s million followers will follow the next aesthetic when this one fades. That is not a community. It is a demographic cluster attached to an engagement pattern.

The family who has a song in their grandmother’s language — that is a community. It is small. It is specific. It is exactly the size the limbic system was built to respond to.

The tools are available. The question is what you make with them, and for whom, and whether the person who receives what you make will know that it was made for them specifically.

That knowledge — the sensation of being seen, not served content — is the thing the algorithm cannot manufacture.

Not yet.

Subscribe at musinique.substack.com for the methodology behind every Musinique project — including the research trilogy that this essay draws from. The prompts, the code, and the findings are not a secret.

Tags: Jessica Foster AI influencer fake persona, streaming algorithm engagement manipulation, ghost artist intent versus deception, Musinique Musical Endogeneity research, authentic versus algorithmic community building

#MusiqueAI #HumansAndAI #AIMusic #IndieMusician #SpiritSongs #LyricalLiteracy #OpenSourceAI #MusicResearch #GhostArtists #AIforHumans