The Human Half: What AI Can't Do — Causal Reasoning

Proposed "What AI Can't Do" series of AI courses

Note on the “What AI Can’t Do” series of AI courses

The intelligent response to a forklift is not to practice lifting heavier objects.

Machines are superhuman at arithmetic — not faster-than-average, but faster than any human who has ever lived, by orders of magnitude, without fatigue, without error. They are superhuman at fact retrieval. They are superhuman at syntactic correctness. They are increasingly capable at pattern recognition across domains where training data is dense and success criteria are well-defined.

None of this should frighten an educator. All of it should reorganize one.

The forklift does not make the human obsolete. It makes the human who cannot operate a forklift obsolete, while making the human who can operate one dramatically more capable. The intelligent response is to learn to operate it, maintain it, understand what it can and cannot lift — and most importantly, to develop the judgment to know what needs lifting in the first place.

We are in the early years of the most powerful cognitive forklifts ever built. The curriculum is still teaching students to lift with their backs.

The Human Half is a graduate course series built on that reorganization. Not what machines can’t do — what humans need to develop to work alongside machines that can do so much. The judgment layer. The causal reasoning layer. The capacity to know when a result should be trusted and when the tool has been handed a question it cannot answer.

The first course — Causal Reasoning — teaches engineers to build and defend the causal models that AI estimation tools require humans to supply. The identification layer: constructing the graph, choosing what to condition on, defending the assumptions. The part no algorithm performs. The part that determines whether a causal AI tool produces a result that means something or a result that merely looks like it does.

The thinking behind the series is at theorist.ai — where I’ve been building a taxonomy of the human intelligences the AI era most urgently requires us to teach, and asking what it would look like to actually teach them.

The curriculum is coming. The first course is in development at Northeastern University’s College of Engineering.

The Human Half: What AI Can’t Do — Causal Reasoning

Proposed Graduate Course | College of Engineering | 4 Credit Hours

WELCOME

I’ve spent years watching capable engineers make confident causal claims from data that couldn’t support them — not because they weren’t smart, but because no one had ever taught them the layer of reasoning that sits between the data and the claim. They knew how to build the model. They didn’t know how to ask whether the model was measuring what they thought it was measuring.

That gap is what this course closes.

Causal AI tools are genuinely powerful. They can estimate effects, run sensitivity analyses, and produce clean output with narrow confidence intervals. What they cannot do is draw your causal graph, choose what to condition on, or defend the assumptions that make a result trustworthy. That layer — the identification layer — requires domain expertise that no algorithm supplies. It requires you to know your field well enough to argue, in writing, why the arrows in your causal model point the way they do. No training data replaces that. No model learns it. It is the Human Half.

This course teaches you to perform it.

Students leave able to build a defensible causal model for a problem in their own engineering domain, hand it off to an estimation tool with confidence, and evaluate whether the result should be trusted. More importantly, they will be able to answer — clearly, in a job interview or a boardroom — the question that separates engineers who use AI well from engineers who use it confidently and incorrectly: “Is what your system is measuring actually causing the outcome, or just correlated with it?”

The course is demanding in a specific way. It does not ask students to memorize frameworks or reproduce procedures. It asks them to make judgment calls, defend them to skeptical peers, and revise them when the argument doesn’t hold. That is harder than a methods course — and more valuable.

THE HUMAN HALF SERIES

We are in the early years of the most powerful cognitive tools ever built. AI systems are superhuman at pattern recognition, fact retrieval, arithmetic, and syntactic correctness. They are genuinely poor at constructing causal models, formulating the right questions, auditing the plausibility of their own outputs, and knowing when not to proceed.

The Human Half series develops exactly those capacities — the forms of reasoning that AI tools require humans to supply, and that graduates who only learned to use the tools will not have.

This course — Causal Reasoning — is the series entry point. It develops one specific, high-value cognitive skill: the ability to build a defensible model of what causes what in a domain, and to know what that model can and cannot support. It is not a course about causal AI tools. It is a course about the thinking those tools cannot do, that engineers will need to do every time they use them.

LEARNING OUTCOMES

By the end of this course, students will be able to:

Distinguish statistical association from causal effect, naming the assumption required to move from one claim to the other

Locate the identification layer within a causal analysis workflow and name the decisions within it that require domain judgment

Diagnose a causal claim as well-identified or under-identified, specifying which assumption is load-bearing and where it could fail

Construct a directed acyclic graph (DAG) for a domain problem, correctly placing confounders, mediators, and colliders with every arrow stated as a causal claim

Distinguish confounders, mediators, and colliders by structural position and predict the consequence of conditioning on each

Apply the backdoor criterion to derive a valid adjustment set for a given DAG

Defend the assumptions encoded in a DAG to a skeptical collaborator, with explicit plausibility rankings

Translate a completed DAG into an estimation specification document for a causal tool

Evaluate causal estimation tool output against the original DAG’s assumptions

Design a complete causal analysis plan for a novel domain problem, from DAG construction through output evaluation

Assess whether a causal analysis should be attempted or reported as definitive given the available data and assumptions
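To make the outcomes on confounders, mediators, and colliders concrete, here is a minimal simulation sketch. It is not taken from the course materials. The three toy structures, the coefficients, the linear Gaussian form, and every function and variable name are assumptions chosen for illustration. It shows how the same move, conditioning on a third variable, helps in one structure and does damage in the other two.

```python
import random
import statistics


def slope(x, y):
    """Least-squares slope from regressing y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def residuals(v, z):
    """What is left of v after removing its linear dependence on z."""
    b = slope(z, v)
    mz, mv = statistics.fmean(z), statistics.fmean(v)
    return [vi - mv - b * (zi - mz) for vi, zi in zip(v, z)]


def adjusted_slope(x, y, z):
    """Slope of y on x after linearly conditioning both on z."""
    return slope(residuals(x, z), residuals(y, z))


def noise(rng, n):
    """n independent standard normal draws."""
    return [rng.gauss(0, 1) for _ in range(n)]


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 20_000

# Confounder (assumed structure): Z -> X and Z -> Y, no arrow from X to Y.
z = noise(rng, n)
x = [zi + e for zi, e in zip(z, noise(rng, n))]
y = [2 * zi + e for zi, e in zip(z, noise(rng, n))]
confounded_raw = slope(x, y)              # near 1, although X has no effect on Y
confounded_adj = adjusted_slope(x, y, z)  # near 0 once Z is adjusted for

# Mediator (assumed structure): X -> M -> Y, total effect 1.5 * 2 = 3.
x_m = noise(rng, n)
m = [1.5 * xi + e for xi, e in zip(x_m, noise(rng, n))]
y_m = [2 * mi + e for mi, e in zip(m, noise(rng, n))]
mediated_raw = slope(x_m, y_m)              # near 3, the total effect
mediated_adj = adjusted_slope(x_m, y_m, m)  # near 0, the effect is conditioned away

# Collider (assumed structure): X -> C <- Y, with X and Y independent.
x_c = noise(rng, n)
y_c = noise(rng, n)
c = [a + b + e for a, b, e in zip(x_c, y_c, noise(rng, n))]
collider_raw = slope(x_c, y_c)              # near 0, no association
collider_adj = adjusted_slope(x_c, y_c, c)  # near -0.5, a spurious association

for name, value in [
    ("confounder raw", confounded_raw), ("confounder adjusted", confounded_adj),
    ("mediator raw", mediated_raw), ("mediator adjusted", mediated_adj),
    ("collider raw", collider_raw), ("collider adjusted", collider_adj),
]:
    print(f"{name:>20}: {value:+.2f}")
```

The data alone cannot say which of the three structures produced a given table of numbers. That decision belongs to whoever draws the graph, which is why the outcomes above treat structural position as something to be argued rather than computed.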

COURSE SCHEDULE

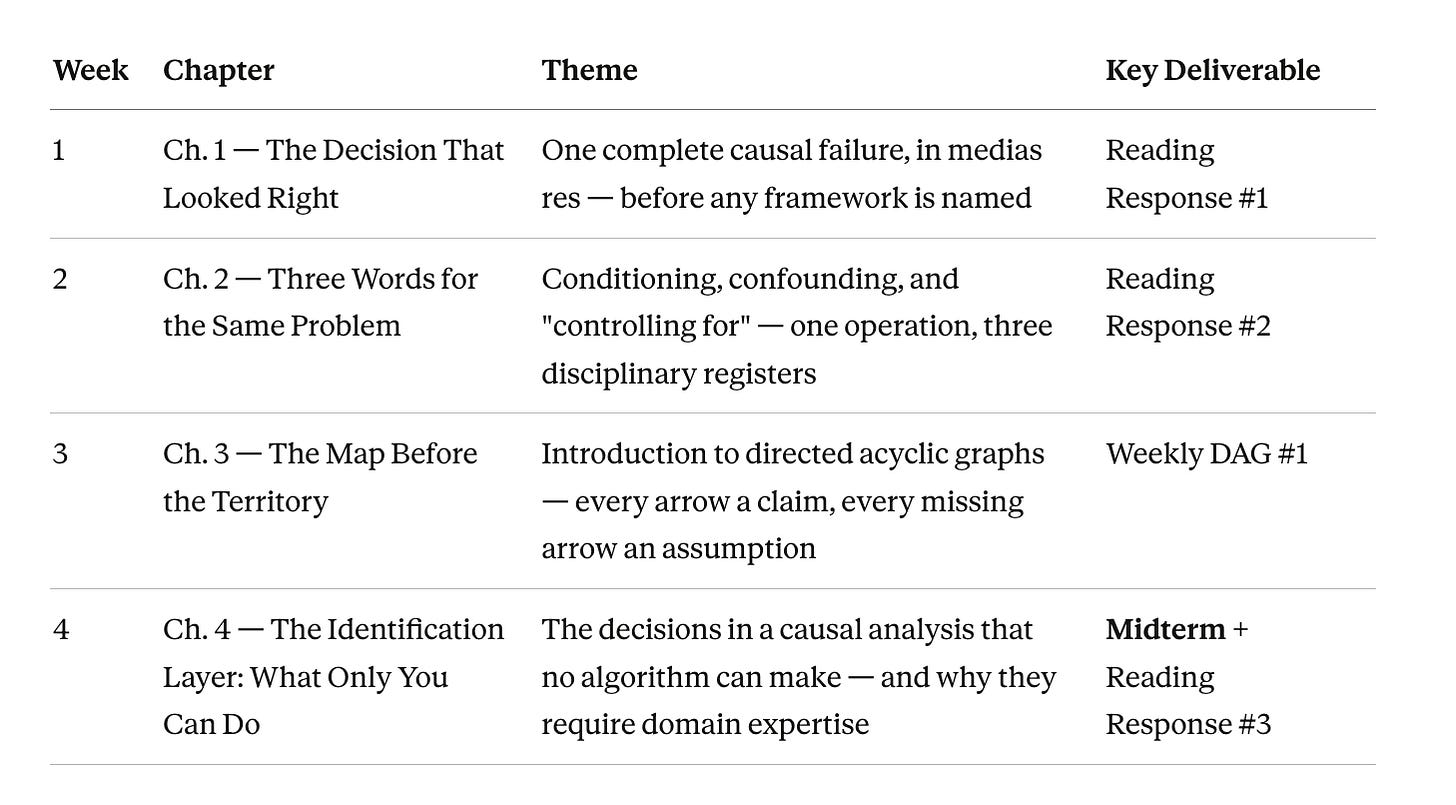

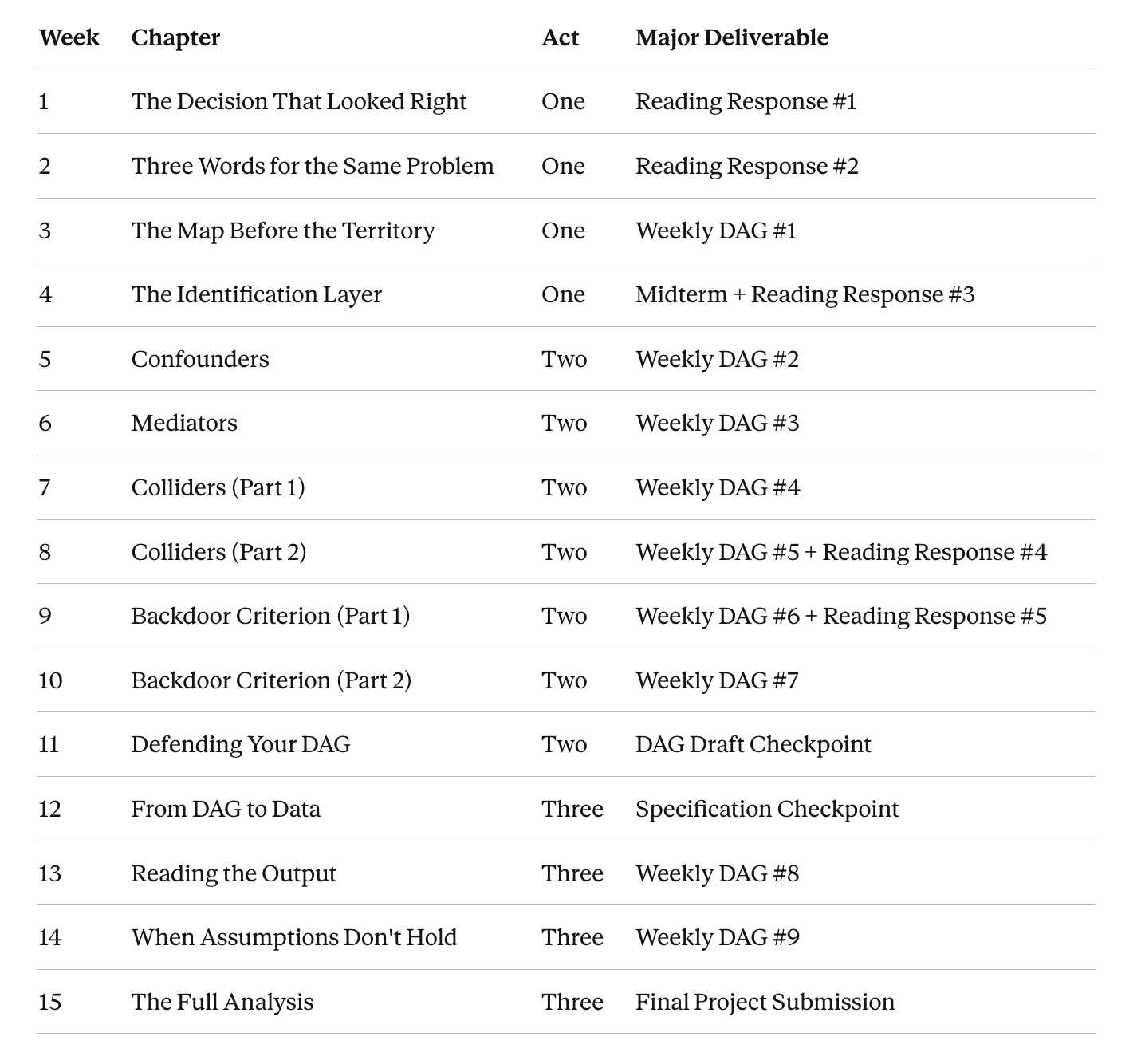

Format: 1× weekly lecture/seminar (75 min each) + 1× weekly DAG In-Class Workshop (75 min each)

Text: The Human Half: What AI Can’t Do — A Practical Guide to Causal Inference for Domain Experts, Nik Bear Brown (2026)

ACT ONE — ESTABLISH

What breaks when causal reasoning is absent — and why it matters for the work you already do

Act One transition: students can name the identification layer and sketch a rough DAG for a described scenario. They cannot yet make the identification decisions correctly — that is Act Two’s job.

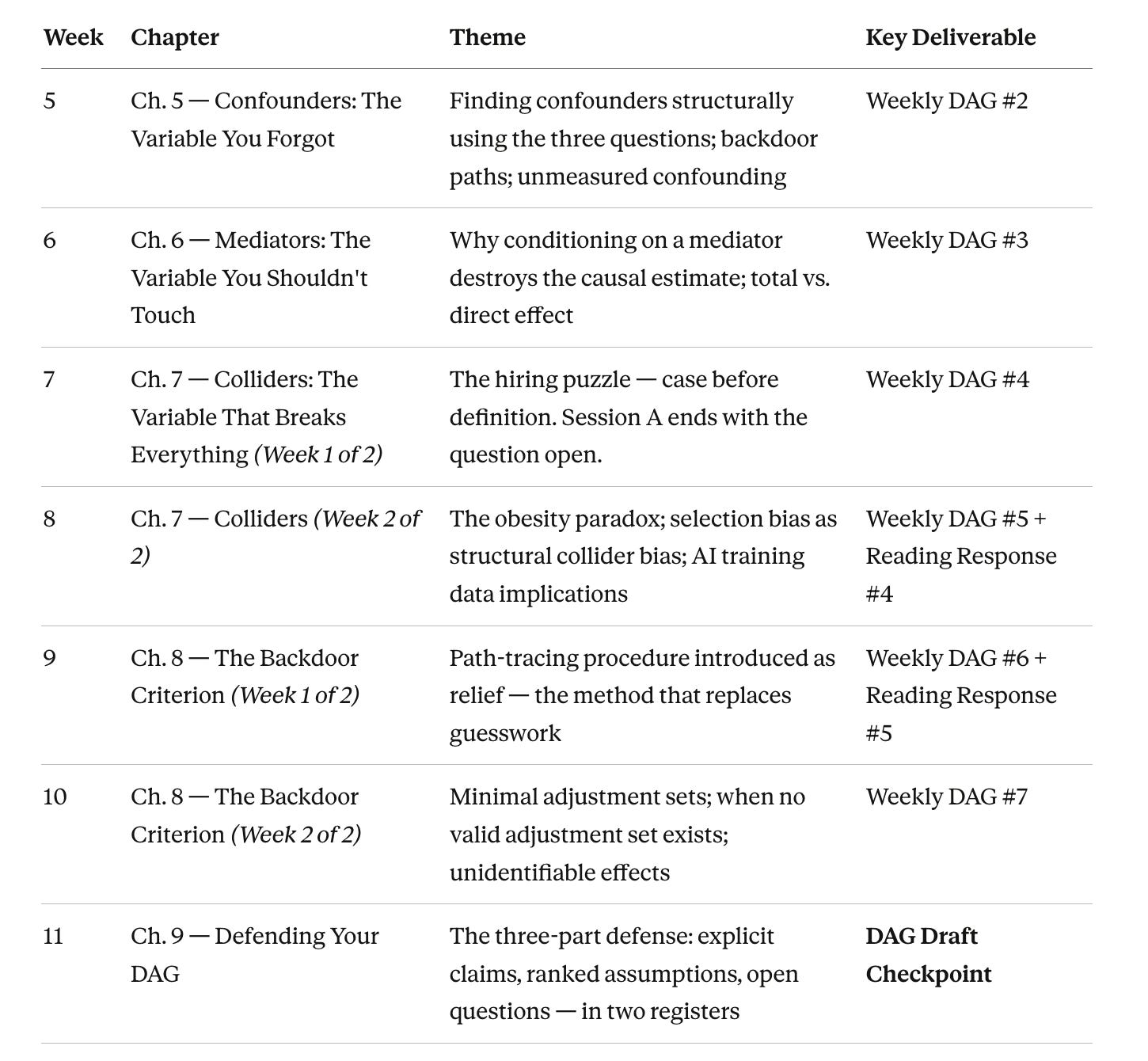

ACT TWO — BUILD

The identification toolkit — built piece by piece through cases you recognize

Act Two transition: students can take an unseen domain problem, draw a defensible DAG, apply the backdoor criterion, produce a valid adjustment set, and defend it in writing. DAG Draft Checkpoint submitted and returned with feedback before Act Three begins.
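As a rough illustration of what checking an adjustment set against the backdoor criterion involves, here is a small sketch in plain Python. It is not the course's tooling. The example graph, the node names, and all function names are hypothetical, and the d-separation test uses the standard moralized ancestral graph construction. The check is mechanical only after a human has supplied the graph.

```python
from collections import deque


def descendants(dag, node):
    """All nodes reachable from `node` along directed edges."""
    seen, stack = set(), [node]
    while stack:
        for child in dag.get(stack.pop(), ()):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def ancestors(dag, nodes):
    """`nodes` together with every node that has a directed path into them."""
    parents = {}
    for u, children in dag.items():
        for v in children:
            parents.setdefault(v, set()).add(u)
    seen, stack = set(nodes), list(nodes)
    while stack:
        for p in parents.get(stack.pop(), ()):
            if p not in seen:
                seen.add(p)
                stack.append(p)
    return seen


def d_separated(dag, x, y, z):
    """True if conditioning on z blocks every path between x and y."""
    keep = ancestors(dag, {x, y} | set(z))
    nbrs = {v: set() for v in keep}
    for child in keep:
        pars = [u for u in keep if child in dag.get(u, ())]
        for p in pars:  # drop edge directions
            nbrs[p].add(child)
            nbrs[child].add(p)
        for i, a in enumerate(pars):  # connect parents that share a child
            for b in pars[i + 1:]:
                nbrs[a].add(b)
                nbrs[b].add(a)
    seen, queue = {x}, deque([x])
    while queue:  # search from x without passing through z
        for w in nbrs[queue.popleft()]:
            if w == y:
                return False
            if w not in seen and w not in z:
                seen.add(w)
                queue.append(w)
    return True


def satisfies_backdoor(dag, x, y, z):
    """Check the backdoor criterion for adjustment set z relative to (x, y)."""
    z = set(z)
    if z & descendants(dag, x):
        return False  # the set may not contain descendants of the treatment
    # Remove edges leaving x so that only backdoor paths remain.
    pruned = {u: (set() if u == x else set(vs)) for u, vs in dag.items()}
    return d_separated(pruned, x, y, z)


# Hypothetical DAG, written as node -> children:
# Z confounds X and Y, M mediates X -> Y, C is a collider of X and Y.
dag = {"Z": {"X", "Y"}, "X": {"M", "C"}, "M": {"Y"}, "Y": {"C"}}

print(satisfies_backdoor(dag, "X", "Y", {"Z"}))       # valid adjustment set
print(satisfies_backdoor(dag, "X", "Y", set()))       # backdoor path via Z left open
print(satisfies_backdoor(dag, "X", "Y", {"Z", "C"}))  # includes a descendant of X
```

Nothing in this check can tell whether the arrow from Z to X belongs in the graph at all. The code verifies a set against the arrows it is given, and the written defense of those arrows remains the student's work.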

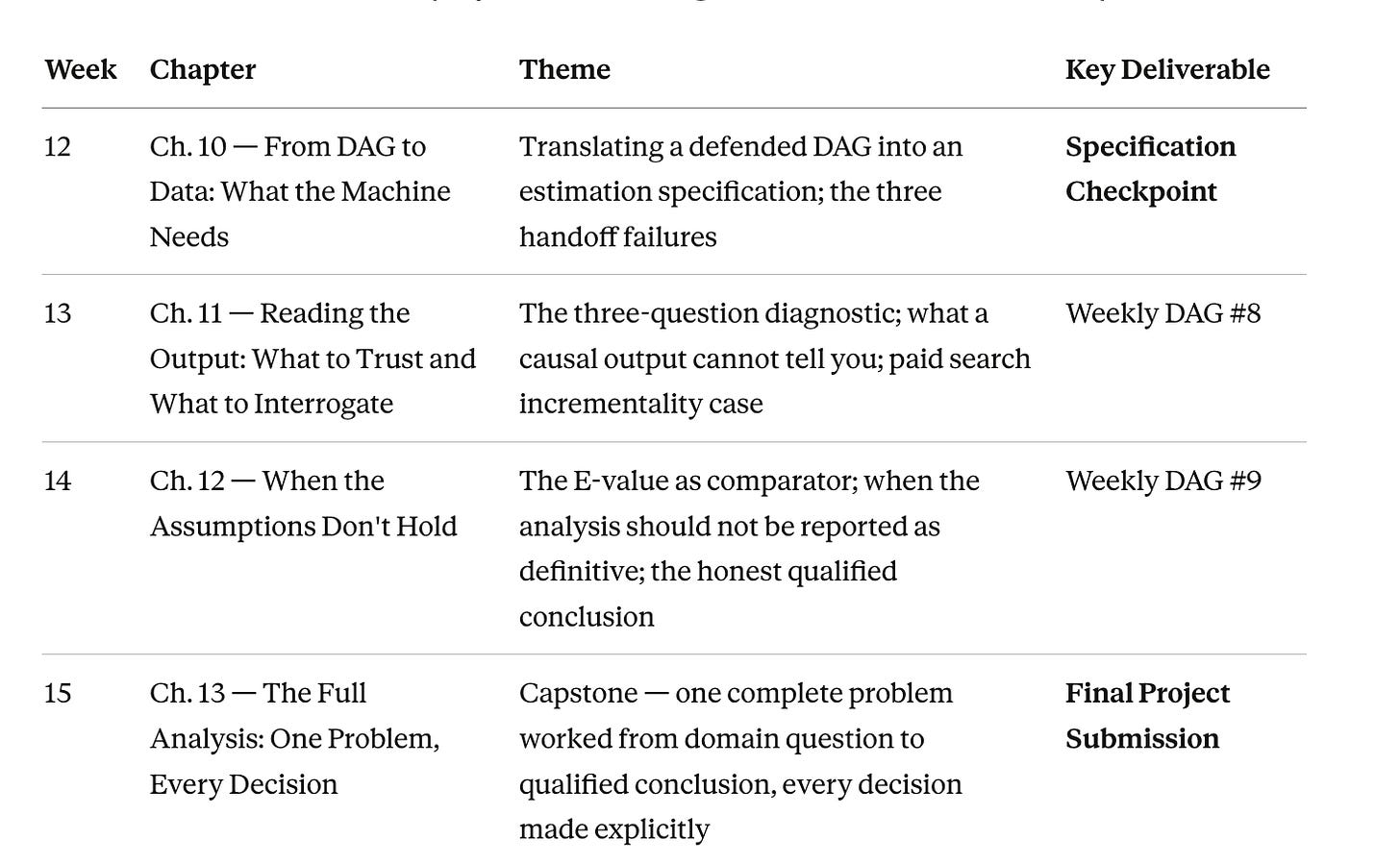

ACT THREE — APPLY

The identification toolkit deployed — answers get less clean, and that is the point

Act Three outcome: students submit a complete causal analysis plan for a problem in their own engineering domain — DAG, assumption defense, estimation specification, output evaluation, sensitivity analysis, and qualified conclusion in two registers. A portfolio piece. A job interview answer.

SCHEDULE AT A GLANCE

The Human Half: What AI Can’t Do — Causal Reasoning

Proposed 5000-level graduate course | College of Engineering | Nik Bear Brown

Full syllabus available on request