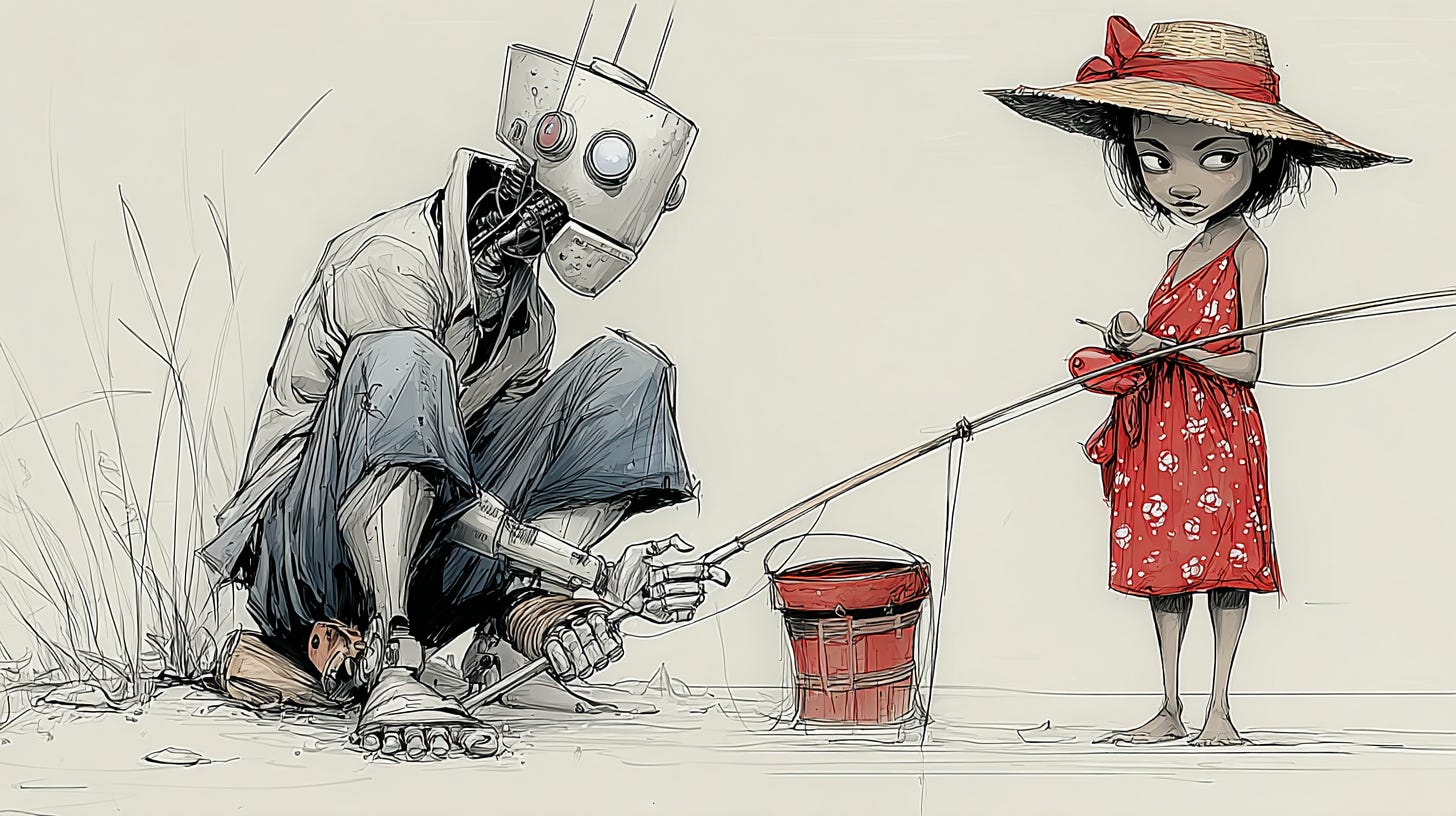

The Robot Tutor and the Fishing Village

What "Personalization" Has Always Meant, and What Adaptive Learning Has Always Delivered

The girl in the Cambodian fishing village was never real.

She was an argument. Between 2013 and 2015, José Ferreira, founder of Knewton, invoked her in promotional materials and public statements to describe what his technology could do: a girl in a fishing village, receiving through Knewton’s adaptive engine the same personalized instruction as a student at an elite private school, growing up to invent the cure for ovarian cancer. Educational inequality, in Ferreira’s framing, was a problem that adaptive learning could address at the software layer: supply the instruction, and the girl’s capacity would do the rest. The fishing village was a rhetorical device, not a pilot deployment.

By 2019, Knewton had been acquired by John Wiley & Sons for a sum understood to be a small fraction of its peak valuation. The partnership with Pearson had dissolved. The product that remained (Knewton Alta, a conventional higher-education courseware platform) bore little resemblance to the “robot tutor in the sky” Ferreira had once described. The fishing village was still waiting.

I want to examine what happened. My subject is not Knewton specifically, and not Ferreira personally; he was the most articulate spokesman for a framing the whole industry was using, not its author. What I want to examine is the word at the center of that framing: the word doing the most rhetorical work in every version of it, the word that has survived the collapse of its first generation of spokescompanies and is still doing the same work today.

Personalization.

What the Word Invokes

The word has a history in educational psychology that predates by decades any commercial deployment of adaptive software. Lev Vygotsky’s zone of proximal development is about personalization: the idea that effective instruction operates in the specific zone between what a learner can do independently and what they can do with support, a zone that differs for every learner and that requires a teacher’s specific attention to identify. Lee Cronbach and Richard Snow spent two decades on aptitude-treatment interactions, trying to formalize the finding that different learners respond differently to different instructional approaches: that no single method is optimal for everyone, and that the optimal method for a given learner depends on who that learner is. The differentiated-instruction tradition in teacher education has argued for thirty years that good teaching requires knowing students individually, designing instruction around their specific needs, and adjusting in real time to what each student brings and what each student shows.

The construct is real. It has serious empirical and theoretical grounding. When Ferreira said Knewton was personalizing learning, he was invoking this history, pointing at a tradition that educational psychology had spent decades documenting and that every good teacher knows in their bones as what it means to actually teach rather than merely deliver content.

What Knewton’s technology operationalized was different.

Knewton’s engine was built on two well-established statistical techniques. The first was Item Response Theory, the mathematical framework underlying modern standardized testing, which models the probability of a correct response as a function of a student’s latent ability and an item’s difficulty. The second was Bayesian Knowledge Tracing, which estimates whether a student has mastered a specific discrete skill by updating probability estimates as the student responds to items. Together, these gave Knewton a learner model: a collection of probability distributions over latent abilities and specific skill masteries, updated continuously as the student interacted with the system.
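To make the two techniques concrete, here is a minimal sketch of each. It is not Knewton’s code, which was proprietary; it assumes the simplest textbook forms: a one-parameter (Rasch) IRT model, and the standard Bayesian Knowledge Tracing update with prior mastery, slip, guess, and learning rates. The parameter values are arbitrary choices for illustration, not fitted estimates.

```python
import math


def rasch_p_correct(theta: float, b: float) -> float:
    """One-parameter (Rasch) IRT: probability of a correct response,
    given latent ability theta and item difficulty b on the logit scale."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def bkt_update(p_mastered: float, correct: bool,
               p_slip: float = 0.1, p_guess: float = 0.2,
               p_learn: float = 0.15) -> float:
    """One Bayesian Knowledge Tracing step for a single skill.

    Default parameter values are illustrative assumptions only."""
    if correct:
        # P(mastered | correct): a mastered student answers right unless they slip;
        # an unmastered student answers right only by guessing.
        num = p_mastered * (1 - p_slip)
        den = num + (1 - p_mastered) * p_guess
    else:
        # P(mastered | incorrect): a mastered student answers wrong only by slipping.
        num = p_mastered * p_slip
        den = num + (1 - p_mastered) * (1 - p_guess)
    posterior = num / den
    # Learning transition: an unmastered skill may become mastered after this opportunity.
    return posterior + (1 - posterior) * p_learn


print(rasch_p_correct(0.0, 0.0))  # ability equals difficulty
print(round(bkt_update(0.5, True), 4))
```

Calling `rasch_p_correct(0.0, 0.0)` gives 0.5: a student whose ability equals the item’s difficulty has even odds. Starting from a mastery estimate of 0.5, one correct answer moves `bkt_update` to about 0.85 under these assumed parameters. Note what both functions take as input: a right-or-wrong response to a tagged item, and nothing else.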

This is real technology. It is not trivial to build. The engineers who built it did substantive mathematical work. Knewton’s claim that its engine operated on sophisticated foundations was true. What was not quite true was the claim about what those foundations amounted to.

The learner model Knewton maintained was expressible, in its technical form, as: the probability this student has mastered skill A is 0.78; the probability this student has mastered skill B is 0.34; the student’s estimated ability on dimension X is 1.2 standard deviations above the population mean. This is useful information for deciding what to present next. It is not a model of the student as a person. It is not a model of their interests, their emotional state, their cognitive style, their cultural background, their creative capacity, their relationship to learning. It is a model of item-response patterns on a bank of pre-authored content.
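The learner model described above can be written down almost literally. The sketch below is hypothetical (the class, its field names, and the 0.95 mastery threshold are illustrative assumptions, not any vendor’s schema), but it makes the point structurally: every field is a number inferred from item responses, and there is no slot for anything else.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class LearnerModel:
    # Hypothetical structure mirroring the example in the text.
    mastery: dict[str, float] = field(default_factory=dict)  # P(mastered) per tagged skill
    ability: dict[str, float] = field(default_factory=dict)  # latent ability, in SDs from the population mean

    def next_skill(self, threshold: float = 0.95) -> str | None:
        """Return the least-mastered skill still below the (assumed) mastery threshold."""
        open_skills = {s: p for s, p in self.mastery.items() if p < threshold}
        if not open_skills:
            return None
        return min(open_skills, key=open_skills.get)


model = LearnerModel(
    mastery={"skill_A": 0.78, "skill_B": 0.34},
    ability={"dimension_X": 1.2},
)
print(model.next_skill())
```

Here `next_skill()` returns `"skill_B"`, the least-mastered skill below threshold. Adding a field for interests or emotional state would be trivial as syntax; filling it from right-or-wrong item responses would not.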

The gap between “we know this student better than their parents” and “our model assigns probabilities to their mastery of skills we’ve tagged to a knowledge graph” is the central artifact of the adaptive-learning era.

The Fishing Village Made Specific

The girl in the Cambodian fishing village makes the gap visible: name each requirement her story depends on, and the distance between what was claimed and what was possible becomes concrete.

For the girl to receive, through Knewton’s engine, instruction equivalent to an elite private-school education, the technology would need, first, content: a comprehensive curriculum in mathematics, science, language, and humanities, built by human curriculum developers, available in a language she could read, calibrated for her cultural and linguistic context. Knewton licensed pre-authored material from publishers. The content was what the publishers had built and the partnerships had arranged. The engine sequenced content that already existed. Building the content was not what the engine did.

The technology would need, second, an outcome measure capable of telling whether the instruction was producing the kind of understanding that leads to cancer research — conceptual depth, transfer across domains, creative problem-solving, the tacit skills that accumulate over years of serious engagement with scientific thinking. Knewton’s engine could measure item-level response patterns on pre-authored assessments. Whether those patterns indexed what a future researcher would need was not addressed. The engine was not designed to measure the construct the rhetoric invoked.

The technology would need, third, to function in conditions of intermittent electricity, unreliable internet, shared devices, limited home support, a language and cultural context for which the content was probably not designed. Knewton was built for contexts with substantially more infrastructure. The rhetoric invoked the fishing village as a demonstration of reach. The technology had not been deployed there or validated there.

The claim was aspirational, and the word “could” was doing substantial work. What was true was that the technology could hypothetically produce this outcome if a great many other things were also true, none of which was Knewton’s responsibility or within Knewton’s control. The fishing village was a vision of a future in which problems having nothing to do with adaptive sequencing algorithms had already been solved. It was not a description of what Knewton could actually deliver.

Three Systems, One Pattern

The pattern the Knewton arc illustrates is not Knewton-specific. It appears, in different configurations, across every major adaptive-learning platform that followed.

DreamBox Learning, focused on K-8 mathematics and backed by the strongest external evidence base in the category, has been evaluated by the Harvard Center for Education Policy Research in multiple studies. The evaluations used standardized mathematics assessments over school-year timescales and were conducted by researchers with no affiliation to the company. The findings: effect sizes in the range of 0.10 to 0.15 standard deviations for students using the platform at recommended levels. Real effects. Detectable by rigorous researchers using independent measures. Considerably more modest than the marketing implied. And dependent, in every evaluation, on implementation — on how much classroom time schools actually allocated to the platform. The adaptive sophistication of the software did not substitute for the hours it required.
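It helps to translate 0.10 to 0.15 standard deviations into something more intuitive. One standard convention, Cohen’s U3, asks where the average treated student would land in the untreated distribution. The sketch below assumes normally distributed scores with equal variance in both groups; it is an illustrative conversion, not a figure the evaluations themselves report.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal CDF, computed via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def percentile_of_average_treated_student(effect_size: float) -> float:
    """Cohen's U3: percentile of the control distribution reached by the
    average treated student, assuming normal outcomes with equal variance."""
    return 100.0 * normal_cdf(effect_size)


for d in (0.10, 0.15):
    print(f"d = {d:.2f}: 50th -> {percentile_of_average_treated_student(d):.0f}th percentile")
```

Under those assumptions, effects of 0.10 and 0.15 move the average student from the 50th percentile to roughly the 54th and 56th, respectively.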

i-Ready, among the most widely deployed adaptive platforms in American K-12 education, integrates adaptive diagnostic assessment with what the company calls “Personalized Instruction” — a sequence of pre-authored lessons targeted at the student’s estimated level. Critics have noted that the personalization, operationally, consists of placing students at different starting points in a common instructional sequence. Students are still completing pre-authored lessons. They are starting at different points and progressing at different speeds. Whether this is personalization in the sense the word implies — instruction responsive to who the student is — or more honestly adaptive placement within a fixed curriculum, is exactly the question the word is being deployed to avoid asking.
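The critics’ description can be stated in a few lines. The sketch below is a hypothetical model of adaptive placement, not i-Ready’s actual logic: the lesson names are placeholders, and the diagnostic result is reduced to a single integer level.

```python
from __future__ import annotations

# Hypothetical fixed lesson sequence; the names are placeholders.
SEQUENCE = ["counting", "place_value", "addition", "subtraction", "multiplication", "division"]


def place(diagnostic_level: int) -> int:
    """Clamp a diagnostic estimate to a valid starting index in the fixed sequence."""
    return max(0, min(diagnostic_level, len(SEQUENCE) - 1))


def remaining_lessons(diagnostic_level: int) -> list[str]:
    """Every student walks the same path; only the starting point differs."""
    return SEQUENCE[place(diagnostic_level):]


print(remaining_lessons(2))
print(remaining_lessons(4))
```

`remaining_lessons(2)` and `remaining_lessons(4)` return different lists, but both are tails of the same `SEQUENCE`. The diagnostic chooses where a student enters; it does not change what the student encounters.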

ALEKS, built on Knowledge Space Theory, represents the most theoretically rigorous operationalization in the category. Rather than treating ability as a single number, Knowledge Space Theory maps a domain as a set of discrete items and a learner’s knowledge state as the specific subset of items they have mastered. ALEKS uses an AI engine to efficiently navigate the combinatorial space of possible knowledge states, asking questions that narrow its estimate of where the student is. The resulting ALEKS Pie — a visual display of what has been mastered, what has not, what is ready to learn — is grounded in serious mathematics, specified precisely, falsifiable in principle. It has been evaluated in multiple contexts. Effect sizes fall in the same general range as DreamBox and i-Ready.
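The core representation of Knowledge Space Theory is compact enough to sketch. In KST, only certain subsets of items are feasible knowledge states, and the items a learner is “ready to learn” are those whose addition turns the current state into another feasible one (the state’s outer fringe). The four-item structure below is a toy, assumed for illustration; it is not ALEKS’s item set, and it omits the adaptive assessment ALEKS uses to infer which state a student is in.

```python
from __future__ import annotations

# A toy knowledge structure over items a, b, c, d: the feasible knowledge states.
# Hypothetical example; real ALEKS structures cover far larger domains.
STATES = {
    frozenset(), frozenset("a"), frozenset("b"), frozenset("ab"),
    frozenset("abc"), frozenset("abd"), frozenset("abcd"),
}
ITEMS = frozenset("abcd")


def outer_fringe(state: frozenset[str]) -> set[str]:
    """Items the learner is ready to learn: adding any one yields another feasible state."""
    return {q for q in ITEMS - state if state | {q} in STATES}


def pie(state: frozenset[str]) -> dict[str, set[str]]:
    """A text version of the 'pie' split: mastered, ready to learn, not yet reachable."""
    ready = outer_fringe(state)
    return {
        "mastered": set(state),
        "ready_to_learn": ready,
        "not_yet": set(ITEMS - state - ready),
    }


print(pie(frozenset("a")))
```

For a student in state {a}, `pie` reports a as mastered, b as ready to learn, and c and d as not yet reachable. Everything the model knows about the student is that subset.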

What is clarifying about ALEKS is this: even the most theoretically careful operationalization of personalization, one drawing on decades of rigorous mathematical work, models a student’s mastery state over a defined domain of discrete items. It does not model the student’s interests, their emotional state, their cognitive style, their cultural background, their creative capacity, their relationships. ALEKS is honest about this; its documentation says clearly that the system models knowledge states over specific domains. That honesty is what makes it instructive. If even ALEKS stops at mastery states, the gap between the marketing construct and the technical operationalization is not a failure of specific companies. It is a feature of what item-level response tracking can and cannot do.

The Gap and Its Consequences

The word personalization is doing specific rhetorical work. It invokes a construct that educational psychology spent decades building — instruction responsive to the individual learner in the deep sense that Vygotsky pointed at, that good teachers practice, that Cronbach and Snow tried to formalize. The construct is real. The technology operationalizes something narrower: item-level response tracking, probability distributions over mastery parameters, next-item selection from pre-authored content banks, pacing adjustments based on observed response patterns. This is what the data these systems collect and the algorithms they run can actually support. It is not trivial. It is not the same thing as the construct the word invokes.

Three consequences follow.

Critiques of adaptive learning for failing to deliver what the marketing promised are both fair and partially misdirected. Fair, because the systems cannot deliver what the rich construct invokes. Misdirected, because attributing the shortfall to specific companies treats a structural feature of item-level tracking as a product failure. The rhetoric over-promised. The technology delivered what the technology could deliver.

Evaluations of these systems on outcome measures aligned to the item-level tracking are measuring the operationalization, not the construct. They find modest positive effects, which is the honest finding. Whether the same systems produce transfer to novel problems, durable learning over years, growth in dimensions that do not map to any test-bank item — these questions remain mostly unanswered, because answering them would require outcome measures that do not yet exist in the forms evaluators would need.

And the pattern persists. The vocabulary has survived the collapse of Knewton and its generation. When current AI-tutor companies claim to provide personalized tutoring, to adapt to each learner’s needs, to meet students where they are, the claim is doing the same rhetorical work Knewton’s robot tutor in the sky was doing: invoking the rich construct while operationalizing a narrower version. The gap remains where it was.

What to Ask

When you next encounter an educational-technology claim that uses the word personalization, or variants like individualized or adaptive or tailored to the learner or meets each student where they are, two questions will orient you.

What, specifically, is the technical operation? The honest answer for the large majority of systems using this vocabulary is one of a small family: item-level response tracking with adaptive item selection; diagnostic assessment followed by placement in a pre-authored sequence; pacing adjustments based on response patterns; content recommendation from a pre-authored bank based on inferred mastery. If you can name which operation is happening, you have the beginning of an honest account of what the system does. The vocabulary may suggest more. The technical substrate does not support more.
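The first operation on that list, adaptive item selection, can be sketched in a few lines. This assumes a Rasch model and the common maximum-information rule, under which an item is most informative when its difficulty is closest to the current ability estimate. The item bank and its difficulties are hypothetical, and operational testing systems commonly add content-balancing and exposure controls that this sketch omits.

```python
from __future__ import annotations

import math


def rasch_p(theta: float, b: float) -> float:
    """Rasch probability of a correct response."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def item_information(theta: float, b: float) -> float:
    """Fisher information of a Rasch item at ability theta: p * (1 - p)."""
    p = rasch_p(theta, b)
    return p * (1.0 - p)


def select_next_item(theta: float, bank: dict[str, float], administered: set[str]) -> str | None:
    """Pick the not-yet-administered item from a pre-authored bank with maximum information."""
    candidates = {item: b for item, b in bank.items() if item not in administered}
    if not candidates:
        return None
    return max(candidates, key=lambda item: item_information(theta, candidates[item]))


# Hypothetical pre-authored bank: item id -> difficulty on the logit scale.
BANK = {"q1": -1.5, "q2": -0.5, "q3": 0.4, "q4": 1.3, "q5": 2.2}

print(select_next_item(0.5, BANK, set()))
print(select_next_item(0.5, BANK, {"q3"}))
```

With an ability estimate of 0.5, the rule picks `q3` (difficulty 0.4); once `q3` has been given, it picks `q4`, the next-closest. Whatever the rule, the system is choosing among items someone already wrote.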

Does the claim invite the listener to believe the system does something the operation does not do? The answer is often yes, specifically in the dimensions educators and parents most hope for. Operationalized personalization — item selection based on mastery estimates — can contribute to instruction responsive to the individual learner, in contexts where it is embedded in the harder relational and responsive work that teachers do. It cannot replace that work. When a product is marketed as though algorithmic item selection substitutes for a teacher’s specific attention to a specific child, the marketing is doing rhetorical work the technology does not underwrite.

The fishing village is still waiting. The girl who will invent the cure for ovarian cancer has not yet received the education the rhetoric promised. This is not primarily Ferreira’s fault, or Knewton’s, or any single company’s. It is the consequence of a gap that was always structural — between what a word can invoke and what a technical operation can deliver — that the field has chosen, for a decade and more, not to name.

Naming it is the prerequisite to closing it.

Nik Bear Brown is Associate Teaching Professor of Computer Science and AI at Northeastern University and founder of Humanitarians AI (501(c)(3)). This essay appears as part of the Computational Skepticism series at skepticism.ai. | theorist.ai

Tags: adaptive learning personalization gap, Knewton IRT Bayesian knowledge tracing operationalization, DreamBox i-Ready ALEKS efficacy evaluation, personalized learning construct versus operation, EdTech rhetoric fishing village critique