The Production Collapse Nobody Saw Coming

A Response to Critics

I published an essay titled “How a Paper Quilling Frog Video Reveals the Future of Personalized Learning.” The piece described a YouTube video I created—five intricately animated paper quilling frogs singing a counting song—and argued that AI tools had democratized educational content creation, making personalization economically feasible for the first time.

The response was immediate. Nina Harris, a Brand Director at Charles Schwab with four decades in major advertising agencies, offered a crucial correction: I had dramatically undersold production costs. What I estimated at $10,000-$50,000 would actually cost $75,000-$150,000 through a professional production house. My claim about cost collapse was too conservative by a factor of at least three.

Then came Dr. Nada Dabbagh’s question. Dr. Dabbagh is Professor Emerita and former Director of the Division of Learning Technologies at George Mason University, one of the world’s leading experts on Personal Learning Environments, with over 12,000 citations and six published books. Her feedback was polite but pointed:

“Hmm, loved the video but not sure how it supports PL? Is because each jumps into the pool? Sorry, I need help seeing the relevance to PL.”

She wasn’t confused. She was challenging the central thesis. When someone of Dabbagh’s expertise says “I need help seeing the relevance,” she’s asking: Where is the personalized learning actually demonstrated? Who is adapting content to individual learners? How does this differ from broadcast content created more efficiently?

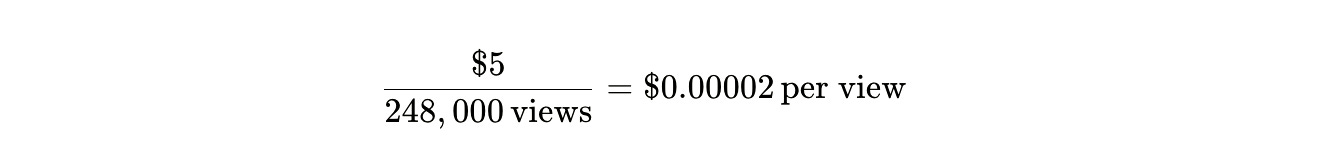

She was right. My video was standardized content viewed 248,000 times, mostly by people who are not children. I hadn’t demonstrated personalized learning. I’d demonstrated efficient content creation using established pedagogical principles.

But her question exposed something more fundamental: I was arguing the wrong case. The story isn’t about proving personalized learning works. The story is about production costs collapsing while maintaining professional quality—a cost reduction so dramatic it eliminates the economic barrier that made personalization impossible.

This is that story.

You’re watching a two-minute video of paper quilling frogs. Five of them, intricately detailed, each one built from thousands of curled paper strips that catch the light like real folded paper. They sit on a speckled log surrounded by fantastical mushrooms with caps that shimmer in impossible colors. The animation has depth—actual three-dimensional space where objects occlude each other properly, where shadows fall where shadows should fall.

The frogs eat bugs. “Yum, yum!” The music pulses at 2 Hz, the frequency researchers have identified as optimal for infant speech processing. One frog jumps. “Glug, glug!” The phonemes are deliberate: the /sp/ cluster in “speckled” and the /gl/ in “glug” aren’t accidents. They produce the amplitude rise times that decades of research have linked to phonological awareness in preschoolers.

The frogs count backward. Five becomes four becomes three. This isn’t just whimsy—backward counting activates different prefrontal cortex regions than forward counting, engaging executive function development that forward sequences don’t touch. The video ends not with depletion but with resolution: the frogs thrive together in the pool, a narrative arc that triggers dopamine release associated with story completion, providing emotional integration that the traditional version lacks.

You’ve watched something that looks professionally produced because it is professionally produced. The question is: what did it cost?

The Fifty-Year Experiment

Before we answer that question, you need to understand what we already know about educational multimedia. Not what we suspect, not what we hope, but what fifty years of research has definitively established.

In 1969, the Children’s Television Workshop launched an experiment. The United States had a problem: children from low-income households were arriving at kindergarten eighteen months behind their wealthier peers in vocabulary and basic numeracy. Benjamin Bloom’s research suggested that half of a child’s lifetime intellectual capacity forms by age five, yet early childhood education remained chronically underfunded. The achievement gap wasn’t narrowing—it was widening.

The experiment was called Sesame Street, and it was designed using a revolutionary integration of cognitive science and media production. Educational Testing Service—the organization that administers the SAT—was hired before the first episode aired to design measurement protocols. Researchers tested segments on children using the “distractor method”: one screen showed Sesame Street content while another displayed random images. If children’s eyes wandered to the distractors more than twenty percent of the time, segments went back for revision.

The results weren’t subtle. Early randomized controlled trials found that children with access to the show experienced a 0.36 standard deviation increase in Peabody Picture Vocabulary Test scores. To contextualize that number: it’s the difference between the 50th percentile and the 64th percentile. It’s comparable to the gains Head Start was producing through intensive in-person preschool.

But Sesame Street cost $5 per child per year. Head Start cost $7,600.

The longitudinal data got stranger. Researchers tracked 570 children who had watched educational programming as preschoolers and re-contacted them as adolescents. The Anderson Recontact Study found that early exposure to educational media predicted higher high school grades in English, math, and science—even after controlling for parental education, family size, and socioeconomic status. Boys who watched Sesame Street as preschoolers showed lower levels of physical aggression a decade later. Viewers scored higher on creative thinking assessments. They read more books for pleasure.

The international evidence replicated. A meta-analysis by Mares and Pan synthesized 24 studies involving more than 10,000 children across 15 countries. The overall effect size: 0.29 standard deviations for learning outcomes. For children in low-socioeconomic environments, the effect jumped to 0.41 standard deviations—roughly equivalent to moving from the 50th percentile to the 66th.
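The percentile translations quoted for these effect sizes can be checked with a few lines of Python. This is a minimal sketch that assumes test scores are normally distributed and that the comparison child starts at the median; the function name `percentile_after_gain` is illustrative and not from any cited study.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def percentile_after_gain(effect_size_sd: float, start_percentile: float = 50.0) -> float:
    """Percentile reached after a gain of `effect_size_sd` standard deviations.

    Assumes normally distributed scores (an assumption, not a finding).
    """
    z_start = STD_NORMAL.inv_cdf(start_percentile / 100)
    return 100 * STD_NORMAL.cdf(z_start + effect_size_sd)


for d in (0.29, 0.36, 0.41):
    print(f"d = {d:.2f} -> about the {percentile_after_gain(d):.0f}th percentile")
```

Under those assumptions, 0.36 lands near the 64th percentile and 0.41 near the 66th, which matches the figures quoted in the text.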

In Kosovo, children who watched the localized co-production were 74 percent more likely to express positive attitudes toward children from different ethnic backgrounds. In Bangladesh, four-year-olds who frequently watched performed at cognitive levels equivalent to five-year-olds who didn’t watch. In Tanzania and South Africa, viewers showed significant gains in HIV/AIDS knowledge.

This wasn’t vibes-based pedagogy. This was Richard Mayer’s Cognitive Theory of Multimedia Learning playing out at population scale. Mayer’s research—over 200 experiments spanning four decades—demonstrated that well-designed multimedia instruction produces a modality effect with an effect size of approximately 1.13 standard deviations. The mechanism is dual-channel processing: visual information enters through one cognitive pathway, auditory information through another. When synchronized properly, this distributes cognitive load across both channels, leaving more working memory available for integration and knowledge construction.

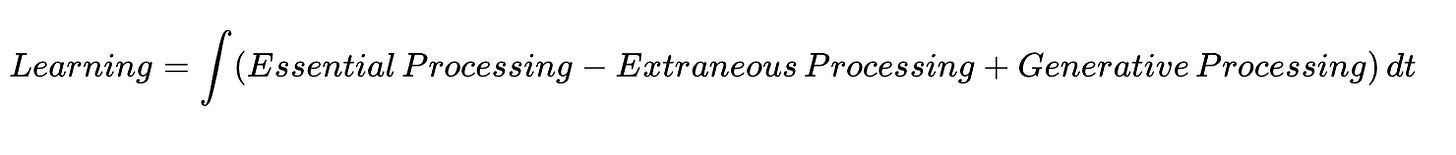

The equation is elegant:

Educational multimedia optimizes this integral by minimizing extraneous processing—the cognitive effort wasted on poorly designed interfaces or confusing layouts—and maximizing generative processing, the deep cognitive work of building mental models.
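One way to write an integral of this kind, offered as an illustrative sketch built on Mayer’s three-way split of processing demands and not as a formula taken from his papers: let $E(t)$, $I(t)$, and $G(t)$ be the extraneous, essential, and generative processing a learner devotes at time $t$ during a lesson of length $T$, and let $C$ be working-memory capacity. All of these symbols are assumptions introduced here.

$$
\max \int_0^T G(t)\,dt \quad \text{subject to} \quad E(t) + I(t) + G(t) \le C \ \text{ for all } t \in [0, T]
$$

Under this reading, lowering $E(t)$ through cleaner design frees capacity that can go to $G(t)$.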

The multi-billion dollar investment in educational media over the past five decades wasn’t speculation. It was a response to overwhelming evidence that it worked. When content adhered to research-based design principles—proper pacing, dual-channel optimization, narrative coherence, phonemic diversity—children learned. Measurably. Persistently. At scale.

The Traditional Reality

Now meet Nina Harris.

She served as Brand Director at Charles Schwab, managing the production of more than 10,000 images, leading teams of twenty creative professionals, and building Schwab’s entire photography department from the ground up. Before that: creative lead across major advertising agencies—Publicis, Saatchi & Saatchi, McCann-Erickson—where she held roles from Art Director to Group Creative Director, managing budgets for clients including Hewlett-Packard, Sony, Nikon, Wells Fargo, and the California Milk Advisory Board.

When Nina Harris evaluates production costs, she’s not estimating. She’s recalling line items from purchase orders she actually signed.

Her assessment of the paper quilling frog video was immediate and specific:

Five years ago, through a production house, this would have cost $75,000 to $150,000 minimum and required three to six months of production time.

Here’s the breakdown she provided:

Pre-production: 3-4 weeks for concept development, storyboards, client approvals.

3D modeling team specializing in organic forms with this level of detail: $15,000 to $25,000.

Texture artists creating thousands of individual paper curl elements: $20,000 to $30,000.

Rendering farms processing the 3D animation at broadcast quality: $10,000 to $20,000.

Music production with session musicians and child voice actors trained in child-directed speech: $10,000 to $15,000.

Post-production: color correction, final approvals, delivery in multiple formats: $15,000 to $25,000.

Agency markup and project management: 20-30 percent on top of direct costs.

This isn’t inflated. This is standard. This is what professional production cost when it required professional production teams.
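The line items above can be summed to see how they relate to the $75,000 to $150,000 range. This sketch assumes the markup applies to the sum of the five priced items and leaves out pre-production, which the breakdown gives only as a duration. The dictionary keys and the function name `budget_range` are illustrative.

```python
# (low, high) estimates in USD, taken from the breakdown above
LINE_ITEMS_USD = {
    "3D modeling": (15_000, 25_000),
    "Texture artists": (20_000, 30_000),
    "Rendering": (10_000, 20_000),
    "Music production": (10_000, 15_000),
    "Post-production": (15_000, 25_000),
}
MARKUP_PERCENT = (20, 30)  # agency markup and project management


def budget_range(items, markup_percent):
    """Return ((direct_low, direct_high), (total_low, total_high)) in USD."""
    direct_low = sum(low for low, _ in items.values())
    direct_high = sum(high for _, high in items.values())
    # Integer arithmetic keeps the totals exact.
    total_low = direct_low * (100 + markup_percent[0]) // 100
    total_high = direct_high * (100 + markup_percent[1]) // 100
    return (direct_low, direct_high), (total_low, total_high)


direct, total = budget_range(LINE_ITEMS_USD, MARKUP_PERCENT)
print(f"Direct costs: ${direct[0]:,} to ${direct[1]:,}")
print(f"With markup:  ${total[0]:,} to ${total[1]:,}")
```

With these assumptions the priced items alone come to $84,000 to $149,500 after markup, inside the quoted range.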

The cost structure created an absolute barrier. At $75,000 per video, educational content production was possible only for institutions. School districts could afford it. PBS could afford it. The Children’s Television Workshop could afford it.

Families could not.

The economic constraint forced standardization. Every child got the same frogs, the same log, the same bugs, because custom variants would cost $75,000 each. Personalization wasn’t expensive—it was impossible.

December 10, 2025

On a Wednesday in December, a video appeared on YouTube. It featured five intricately detailed frogs rendered in paper quilling aesthetic. The production values matched Nina Harris’s professional standards, the values she spent four decades learning to evaluate and two decades managing budgets to achieve.

The video was created by Nik Bear Brown, an Associate Teaching Professor at Northeastern University with a PhD in Computer Science from UCLA and postdoctoral training in Computational Neurology at Harvard Medical School. He produced it through his company Musinique, under the artistic name Mayfield King, as part of a project called Lyrical Literacy.

The total cost: $5 in API credits.

The total time: 5 hours.

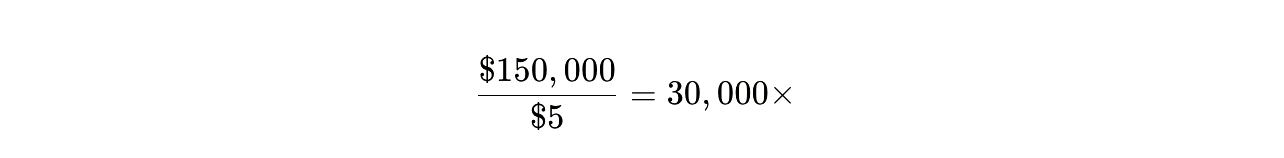

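The cost reduction wasn’t incremental. It wasn’t “20 percent cheaper” or “half price.” On the low end of Nina’s estimate, the reduction was:

$75,000 ÷ $5 = 15,000×

On the high end:

$150,000 ÷ $5 = 30,000×

The time compression was equally dramatic: three to six months of production became five hours.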

This isn’t automation improving efficiency within an existing process. This is the process itself collapsing to effectively zero marginal cost while maintaining professional output quality.
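For readers who want to reproduce the ratios, here is a short sketch. The cost figures come from the text. The time comparison depends on an assumption made here: months are counted as 30 calendar days of 24 hours, so counting only working hours would give smaller factors.

```python
import math

AI_COST_USD = 5
AI_HOURS = 5
HOURS_PER_MONTH = 30 * 24  # assumption: calendar time, 30-day months


def reduction_factor(before: float, after: float) -> float:
    """How many times smaller `after` is than `before`."""
    return before / after


for label, cost in (("low estimate", 75_000), ("high estimate", 150_000)):
    factor = reduction_factor(cost, AI_COST_USD)
    print(f"{label}: {factor:,.0f}x ({math.log10(factor):.1f} orders of magnitude)")

for months in (3, 6):
    factor = reduction_factor(months * HOURS_PER_MONTH, AI_HOURS)
    print(f"{months} months vs {AI_HOURS} hours: {factor:,.0f}x")
```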

The Pedagogical Architecture

The video didn’t achieve professional production values by accident. Brown implemented the same evidence-based design principles that Sesame Street validated:

Backward counting structure: Engages prefrontal cortex regions associated with executive function that forward counting doesn’t activate. The research base for this is extensive—backward counting requires inhibition of the natural forward sequence, creating cognitive load in precisely the regions associated with self-regulation and planning.

2 Hz rhythmic pattern: The musical accompaniment pulses at 2 Hz, matching the frequency identified in magnetoencephalography studies as optimal for infant speech processing. Ten-month-olds with strong neural tracking of 1-3 Hz delta rhythm develop larger vocabularies at 24 months.

Phonemic diversity: “Speckled frogs” isn’t random. The /sp/ consonant cluster, the /k/ and /l/ sounds—these create specific amplitude rise times critical for phonological awareness. “Glug glug” provides different phonemic structure. The diversity is deliberate.

Narrative resolution: The traditional version ends with “then there were no green speckled frogs”—a narrative of depletion. Brown’s version adds: “Each one took a dive, and they’re swimming, feeling alive, down in the pool, oh how they thrive!” Research on pre-verbal mother-infant interactions shows that infants increasingly understand narrative structure between 4-10 months, and completion of narrative arcs correlates with enhanced positive affect.

Visual depth and texture: The paper quilling aesthetic creates actual three-dimensional space through layered curls. This activates stereoscopic vision and enhances visual cortex engagement with spatial relationships—the same neural substrate involved in mathematical spatial reasoning.

Can Brown claim this specific implementation has been validated through the Children’s Television Workshop’s formative research process? No.

Can he claim viewers are experiencing the documented learning outcomes Sesame Street produced? No.

Can he claim he implemented established pedagogical principles using professional production values while collapsing the cost structure by four orders of magnitude? Yes.
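The 2 Hz figure in the list above corresponds to 120 beats per minute. The sketch below shows one way a creator might check a track’s pulse from a list of beat timestamps. The function names and the sample timestamps are hypothetical, and the 1-3 Hz band is simply the delta range mentioned above, not a validated pass/fail threshold.

```python
def tempo_hz(beat_times_s):
    """Average beat frequency in Hz from ascending beat timestamps (seconds)."""
    intervals = [b - a for a, b in zip(beat_times_s, beat_times_s[1:])]
    if not intervals:
        raise ValueError("need at least two beat times")
    return len(intervals) / sum(intervals)


def hz_to_bpm(hz: float) -> float:
    """Convert beats per second to beats per minute."""
    return hz * 60


def in_delta_band(hz: float, low: float = 1.0, high: float = 3.0) -> bool:
    """True if the pulse falls inside the 1-3 Hz range cited in the text."""
    return low <= hz <= high


beats = [0.0, 0.5, 1.0, 1.5, 2.0]  # hypothetical beat onsets, one every half second
pulse = tempo_hz(beats)
print(pulse, hz_to_bpm(pulse), in_delta_band(pulse))
```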

The Population That Became Accessible

There are currently between 3.7 and 4.2 million homeschooled children in the United States.

That number isn’t stable: it’s growing at 4.9 percent annually, more than double the pre-pandemic rate of roughly 2 percent. In 36 percent of states that report data, homeschool enrollment in 2024-2025 reached the highest levels ever recorded, exceeding even the pandemic peaks.

These families currently spend between $700 and $1,800 per child per year on educational curricula and materials. The total market: $2.6 to $6.7 billion annually. Forty-eight percent of homeschooling households have three or more children, multiplying both the costs and the complexity of providing age-appropriate, interest-aligned content.
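The market figure is a product of the population and per-child spending numbers. The sketch below multiplies them out. It suggests the quoted $2.6 to $6.7 billion range uses the 3.7 million figure at both ends, while pairing the upper population estimate with the upper spending estimate would give a higher ceiling. The function name `market_usd` is illustrative.

```python
def market_usd(children: int, spend_per_child_usd: int) -> int:
    """Total annual spending in USD for a given population and per-child spend."""
    return children * spend_per_child_usd


CHILDREN = (3_700_000, 4_200_000)   # population range from the text
SPEND_USD = (700, 1_800)            # per-child annual spend from the text

for children in CHILDREN:
    for spend in SPEND_USD:
        total = market_usd(children, spend)
        print(f"{children:,} children x ${spend:,} = ${total / 1e9:.2f} billion")
```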

The constraint these families face isn’t pedagogical uncertainty. The evidence base for educational multimedia is established. The constraint is economic access to production capability.

At Nina Harris’s professional assessment of $75,000 to $150,000 per video, exactly zero homeschooling families could afford custom educational content. Not “very few” or “only the wealthy”—zero. The math doesn’t work at any income level. A family with three children, each obsessed with different animals, would need $225,000 to $450,000 to produce custom counting videos for each child.

The economic barrier forced these families to use standardized curricula not because standardization is optimal, but because customization was impossible.

Now consider the changed equation:

Traditional model:

Family with 3 children

Annual curriculum cost: $2,100 to $5,400

Customization: Impossible at any price

Current model:

Same family with 3 children

Create “Five Little Speckled Cats” for child #1 (cat-obsessed): $5

Create “Five Little Shiny Trucks” for child #2 (truck-obsessed): $5

Create “Five Little Spotted Dinosaurs” for child #3 (dinosaur-obsessed): $5

Total cost: $15

Annual savings: $2,085 to $5,385

The marginal cost of variants isn’t just low—it’s effectively zero. Creating “Five Little Speckled Cats” costs the same $5 as “Five Little Speckled Frogs” because the template, the tools, and the process are identical. Only the variable changes.
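The “only the variable changes” idea can be sketched as a template with two slots. The `Variant` class, its field names, and `family_costs` are hypothetical illustrations; the $5 per video and the $700 to $1,800 curriculum figures are the ones given in the text.

```python
from dataclasses import dataclass

COST_PER_VIDEO_USD = 5  # API-credit figure reported in the essay


@dataclass(frozen=True)
class Variant:
    child: str
    adjective: str
    subject: str

    @property
    def title(self) -> str:
        # Same template for every child; only the slots change.
        return f"Five Little {self.adjective} {self.subject}"


def family_costs(variants, curriculum_low=700, curriculum_high=1_800):
    """Compare custom-video spend with the per-child curriculum range."""
    n = len(variants)
    return {
        "custom_total": n * COST_PER_VIDEO_USD,
        "curriculum_range": (n * curriculum_low, n * curriculum_high),
    }


VARIANTS = [
    Variant("child #1", "Speckled", "Cats"),
    Variant("child #2", "Shiny", "Trucks"),
    Variant("child #3", "Spotted", "Dinosaurs"),
]

for v in VARIANTS:
    print(f"{v.child}: {v.title} (${COST_PER_VIDEO_USD})")
print(family_costs(VARIANTS))
```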

Answering Dr. Dabbagh

Dr. Dabbagh was right to question whether my original essay demonstrated personalized learning. It didn’t. What it demonstrated was something different—and arguably more important.

The video showing 248,000 views isn’t evidence of personalized learning happening. Most viewers aren’t children. Most aren’t using it as part of structured education. It’s standardized content consumed passively, exactly like traditional broadcast media.

But here’s what the production process demonstrates: The capability that justified billions in investment—evidence-based multimedia instruction at professional quality—has become accessible to individuals at near-zero cost.

This doesn’t prove that “Five Little Speckled Cats” works better pedagogically than “Five Little Speckled Frogs.” It proves that creating “Five Little Speckled Cats” no longer requires institutional backing.

The distinction matters. Personalized Learning Environments, as Dr. Dabbagh’s research defines them, are learner-driven systems where individuals direct their own learning, connect to social networks, and engage in self-managed education. My video is none of those things.

But it is a demonstration that the economic constraint preventing families from creating customized educational content—content that could potentially be integrated into true PLEs—no longer exists.

The question isn’t “Have I proven PLEs work better?” The research literature on that is extensive and ongoing.

The question is: “What happens when the production capability that was restricted to institutions becomes universally accessible?”

The Comparison That Matters

You need to understand what’s being claimed here and what isn’t.

Not claimed:

That “Five Little Speckled Cats” is pedagogically superior to “Five Little Speckled Frogs”

That AI-generated content has undergone Sesame Street’s formative research validation

That 248,000 YouTube views equal 248,000 children experiencing learning outcomes

That this video demonstrates personalized learning in the academic sense Dr. Dabbagh uses the term

What is claimed:

Educational multimedia with proper design produces measurable learning gains (50 years of evidence)

Production values that previously required $75,000-$150,000 can now be achieved for $5 (Nina Harris’s professional assessment)

The pedagogical principles Sesame Street validated can be implemented by individuals, not just institutions

The economic barrier that made customization impossible no longer exists

This is the parallel to Sesame Street’s original innovation:

Sesame Street (1969):

Proved educational multimedia could deliver Head Start-level outcomes

At $5 per child per year instead of $7,600

Cost reduction: 1,520×

Made quality educational content accessible at population scale through broadcast

AI-generated content (2025):

Proves professional production values can be achieved individually

At $5 total instead of $75,000-$150,000

Cost reduction: 15,000-30,000×

Makes quality educational content accessible for customization through digital tools

Both innovations are about maintaining quality while collapsing cost barriers. Sesame Street didn’t need to prove television was pedagogically superior to in-person preschool—it needed to prove television could deliver comparable outcomes at transformative cost. The evidence showed it could.

AI-generated content doesn’t need to prove personalization is pedagogically superior to standardization—it needs to prove professional production values can be maintained at transformative cost. Nina Harris’s assessment confirms they can.

The Cost Per Child

The mathematics become surreal when you calculate per-child costs:

Head Start: $7,600 per child per year

Sesame Street: $5 per child per year (broadcast model amortizing production costs across millions)

AI-generated video at current viewing numbers: $5 ÷ 248,000 views ≈ $0.00002 per view

Even if only a fraction of viewers are children using it educationally, the per-child cost approaches rounding error.

But this comparison is misleading because it conflates distribution with production. The more meaningful comparison is production cost per unit of professional-quality content:

Traditional production: $75,000-$150,000 per 2-minute video

AI-enabled production: $5 per 2-minute video

Reduction: 15,000-30,000×

This is the capability that justified billions in investment over five decades becoming accessible to individuals. The expertise Nina Harris spent forty years developing—the ability to evaluate, manage, and execute professional media production—remains valuable. But the financial barrier that restricted that capability to institutions has collapsed.

What Changes

Here’s what you need to understand about economic barriers and personalization:

When customization costs $150,000 per variant, the question “Does personalization improve learning outcomes?” is academic. No family can afford to test the hypothesis. The economics force standardization regardless of pedagogical considerations.

When customization costs $5 per variant—less than the materials families already purchase for standardized curricula—the question changes. It’s no longer “Does personalization justify the enormous additional cost?” It becomes: “When customization costs essentially nothing, why wouldn’t you customize?”

The burden of proof inverts.

Sesame Street didn’t need to prove educational multimedia was universally superior to all alternatives. It needed to prove it could deliver measurable outcomes at costs that made population-scale deployment possible. The evidence showed it could: 0.29-0.41 standard deviation gains, 14 percent reduction in grade-level failure, effects persisting into adolescence, all delivered at $5 per child per year.

AI-generated educational content doesn’t need to prove personalization is universally superior to standardization. It needs to prove professional production values can be maintained at costs that make individual customization feasible. Nina Harris’s assessment confirms they can: production quality requiring $75,000-$150,000 through traditional methods now achievable for $5.

The pedagogical principles remain constant. Backward counting still engages prefrontal cortex development. 2 Hz rhythmic patterns still match optimal speech processing frequencies. Phonemic diversity still builds awareness. Narrative resolution still triggers emotional integration. Dual-channel processing still distributes cognitive load optimally.

What changed is who can implement these principles at professional quality.

The Infrastructure Question

The capability exists. The cost collapsed. The barrier fell.

But capability without infrastructure creates wilderness, not commons. The families of at least 3.7 million homeschooled children can now theoretically create custom educational content. Whether they can create good custom educational content is a different question, and this is where Dr. Dabbagh’s expertise in learning systems design becomes critical.

What’s missing:

Template libraries with pedagogical annotations explaining which variables can be modified while preserving effectiveness. Not just “here’s a template,” but “this template optimizes for phonemic awareness—substitute animals carefully to maintain the /sp/ cluster and varied consonant sounds.”

Quality assessment tools providing computational analysis of custom versions. Check syllable count consistency. Verify phonemic diversity. Measure rhythm stability. Calculate visual coherence. Give creators a “pedagogical quality score” before deployment.

Evidence-based research determining which personalizations preserve or enhance learning outcomes, for which children, under what conditions. Does a cat-obsessed child’s sustained attention to “Five Little Speckled Cats” compensate for any acoustic differences from the validated “frogs” version? We don’t know because the studies haven’t been run.

Community curation platforms where educators can share, rate, and collectively improve variants. Version control for educational content. Peer review for pedagogy. GitHub for learning.

Accessibility standards ensuring personalized content includes captions, audio descriptions, and accommodations for children with disabilities. Democratization that actually serves all children, not just those whose parents have technical literacy and time.

None of this infrastructure exists at scale. The creation tools raced ahead of the quality assurance systems. We’re generating custom content faster than we can validate whether it works.
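To make the quality-assessment idea concrete, here is a deliberately crude sketch of two of the checks named above: syllable-count consistency and whether a variant keeps consonant clusters such as /sp/. It works on spelling, not on real phonemes. The syllable heuristic and every function name are assumptions made for illustration, and a real tool would need a pronunciation dictionary.

```python
import re

VOWEL_GROUP = re.compile(r"[aeiouy]+")


def _letters(word: str) -> str:
    return re.sub(r"[^a-z]", "", word.lower())


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, discount a silent final 'e'."""
    w = _letters(word)
    if not w:
        return 0
    n = len(VOWEL_GROUP.findall(w))
    # 'five' has a silent 'e'; 'little' (consonant + 'le') does not.
    if w.endswith("e") and not re.search(r"[^aeiouy]le$", w) and n > 1:
        n -= 1
    return max(n, 1)


def line_syllables(line: str) -> int:
    return sum(count_syllables(w) for w in line.split())


def onset_clusters(line: str) -> set:
    """Leading consonant letters of each word, a spelling-based proxy for onsets."""
    onsets = set()
    for word in line.split():
        match = re.match(r"[^aeiouy]+", _letters(word))
        if match:
            onsets.add(match.group())
    return onsets


def check_variant(reference: str, variant: str) -> dict:
    return {
        "syllables_match": line_syllables(reference) == line_syllables(variant),
        "lost_onsets": sorted(onset_clusters(reference) - onset_clusters(variant)),
    }


REFERENCE = "Five little speckled frogs"
for variant in ("Five little speckled cats",
                "Five little shiny trucks",
                "Five little spotted dinosaurs"):
    print(variant, check_variant(REFERENCE, variant))
```

Even this toy version flags that the trucks line drops the “sp” onset and that the dinosaurs line runs two syllables long, the kind of warning a creator could act on before publishing.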

This is the research gap that matters. Not “Does educational multimedia work?”—fifty years of evidence answers that definitively. But: “Do the specific customizations AI tools enable preserve the pedagogical benefits the research base established?”

The Uncontrolled Experiment

Whether we have infrastructure or not, the experiment is running.

Parents are generating custom educational content right now. The families of at least 3.7 million homeschooled children, plus millions more in traditional schools exploring supplementary materials, plus the explosion of “AI tutors” and “personalized learning apps” flooding the market. Children are consuming this content. Their developing brains are being shaped by it.

We’re conducting a massive uncontrolled experiment on childhood development with no protocol, no measurement, no longitudinal tracking. The capability to generate content outpaced our ability to evaluate whether that content works.

You can call for studies. You can demand validation. You can insist on evidence before deployment.

But you’re too late. Deployment already happened. The tools are available. The content is being created. The children are watching.

The question isn’t whether we should run this experiment. The question is whether we’ll build measurement infrastructure to learn from the experiment we’re already running.

What Fifty Years Bought

The multi-billion dollar investment in educational multimedia from 1969 to 2025 bought us something specific: validated pedagogical principles implemented at professional production quality.

We know backward counting engages different neural pathways than forward counting. We know 2 Hz rhythmic patterns optimize speech processing. We know phonemic diversity builds awareness. We know narrative resolution triggers emotional integration. We know dual-channel processing distributes cognitive load. We know these things because we spent billions learning them.

That capability—evidence-based multimedia instruction at professional quality—cost $5 per child per year when delivered through broadcast infrastructure requiring institutional backing.

It now costs $5 total when produced through AI tools accessible to individuals.

The cost didn’t collapse because the pedagogy got easier or the production values got lower. The cost collapsed because the tools that implement validated principles at professional quality became democratically accessible.

This is either the democratization of capability or the fragmentation of quality control. Possibly both simultaneously.

What it isn’t is subtle. A 15,000-to-30,000-times cost reduction while maintaining professional quality isn’t incremental improvement. It’s the economic foundation of an entire industry becoming irrelevant overnight.

Dr. Dabbagh was right to question whether I’d demonstrated personalized learning. I hadn’t. What I’d demonstrated was that the barrier preventing personalized learning from being economically feasible just disappeared.

That’s a different claim. But it might be more important.
